GI VR/AR 2006

Augmenting a Laser Pointer with a Diffraction Grating for Monoscopic 6DOF Detection

First published and presented at the 3rd Workshop "Virtuelle und Erweiterte Realität" of the GI special interest group VR/AR (GI-Fachgruppe VR/AR) 2006, extended and revised for JVRB.

urn:nbn:de:0009-6-12754

Abstract

This article illustrates the detection of 6 degrees of freedom (DOF) for Virtual Environment interactions using a simple modified laser pointer and a camera. The laser pointer is combined with a diffraction grating to project a unique laser grid onto the projection planes used in projection-based immersive VR setups. The distortion of the projected grid is used to calculate the translational and rotational degrees of freedom required for human-computer interaction.

Keywords: laser pointer, diffraction grating, 6DOF detection, Virtual Environment interaction

Subjects: Virtual Reality, Human Computer Interaction

Interaction in Virtual Environments requires information about the position and orientation of specific interaction tools or of users' body parts. This information is commonly provided by tracking devices based on mechanical, electromagnetic, ultrasonic, inertial, gyroscopic or optical principles.

Besides quality aspects such as resolution, data rate and accuracy, these devices generally differ in their hardware setup, which includes the control behavior (active or passive) and the use of active or passive markers or sensors.

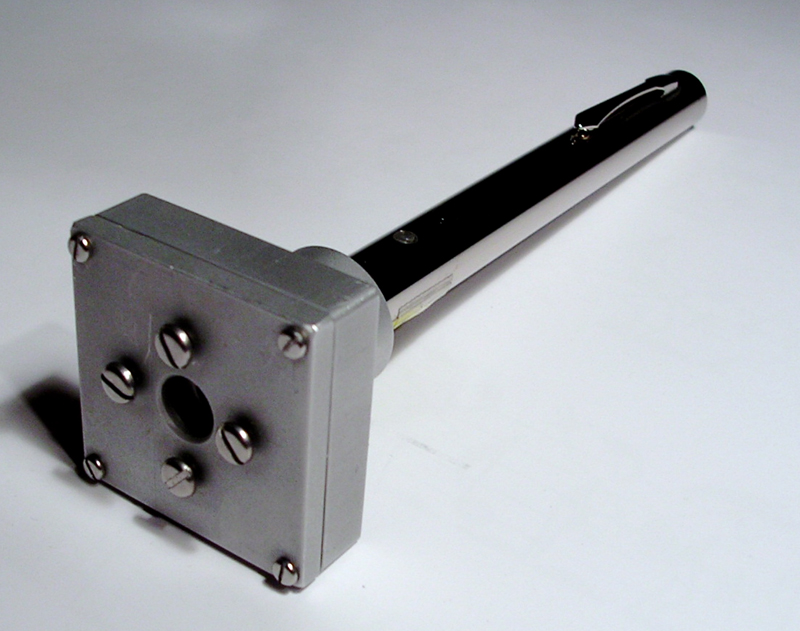

Figure 1. Prototype of the final device. The large cubical head in the lower left of the picture contains the diffraction grating. Four screws around the ray exit allow fine-grained adjustment of the grating's orientation. A detailed schema of the custom-built mount is shown in Figure 3.

For example, several successful optical tracking systems nowadays use reflective infrared (IR) markers, lit by IR LEDs and captured by IR cameras, to track uniquely arranged sensor targets. An alternative approach uses active sensors that emit light themselves; these eliminate the need for an additional lighting device but require additional power sources, mounts and/or cabling.

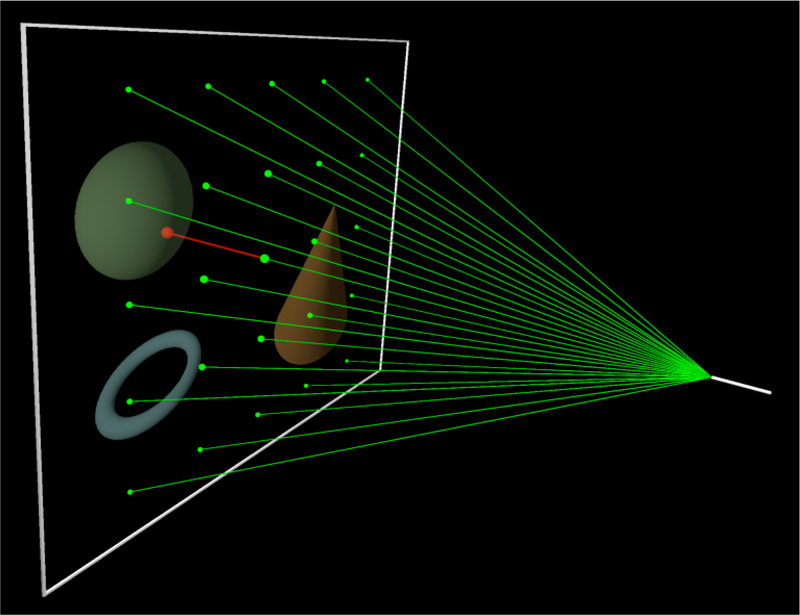

Figure 2. Illustration of the basic idea. The modified laser pointer (right) projects a regular grid of points onto the projection surface (left) using a cross diffraction grating. The distortion of the projected grid is measured by a simple camera monitoring the projection plane from behind. In combination with the laser's wavelength and the diffraction grating's specification, i.e., its angle of divergence, five absolute DOF and one relative DOF (the rotation around the projection plane's normal) can be derived.

Here, laser pointers present a lightweight and low-cost solution for simple interaction purposes where full-body capture is not required and simple tool-based operations like select and drag-and-drop are sufficient. Unfortunately, common laser pointers only provide 2DOF, as their emitted laser beam intersects a plane in a single laser point. In contrast, Virtual Environments strongly depend on depth as an additional degree of freedom, which is necessary to provide believable three-dimensional sensations to users. This results in the requirement of 6DOF, since 3D motions include not only translational movements in three dimensions along the principal axes (3DOF: forward/backward, up/down, left/right) but also rotations about these axes (3DOF: yaw, pitch, roll).

In this article, we describe a laser pointer that is modified to provide 6DOF by attaching a diffraction grating in front of its ray exit, while preserving all of its favorable lightweight and low-cost properties. The prototype of the final device is shown in Figure 1. The 6DOF are measured by analyzing the distorted projection of the diffraction pattern on the VR system's projection surface, as illustrated by the basic setup in Figure 2.

Several approaches use custom-built laser devices that project more than one laser spot onto one or more projection screens to provide additional DOF. Matveyev and Göbel [MG03] propose a multiple-point technique with an infrared laser-based interaction device. It projects three infrared laser spots onto a projection screen using one primary and two auxiliary laser beams. The first auxiliary beam has a fixed angle of divergence, whereas the other can change its deviation angle mechanically relative to the primary beam. With this technique the interaction device has 5DOF: two for movement in the x- and y-directions, one for rotation in the x,y-plane, one for the shift along the z-axis and one for mouse emulation purposes.

The Hedgehog, proposed by Vorozcovs et al. [VHS05], provides 6DOF in a fully enclosed VR display. The device consists of 17 laser diodes with a wavelength of 645 nm in a symmetrical hemispherical arrangement, where the laser diodes are placed at 45-degree angles to each other and are individually controlled by a PIC microcontroller through a serial interface. The fully enclosed VR display has at least one camera per projection screen to track the laser spot positions produced by the Hedgehog. The technique determines the 6DOF with an angular resolution of 0.01 degrees RMS and a position resolution of 0.2 mm RMS.

The approach proposed by Matveyev and Göbel [MG03] has a few disadvantages:

- The number of additional DOF decreases when fewer than the three laser spots are available on the projection screen.
- The distance between the laser spots, which is required for the depth calculation, has to be very small to allow a large interaction radius.
- A high camera resolution and precise subpixel estimation are required.

Vorozcovs et al. [VHS05] propose a technique that allows highly accurate detection of 6DOF at a reasonable update rate. However, this technique ideally requires multiple projection screens and a rather complex hardware installation.

The technique proposed in this work, illustrated in Figure 2, is a laser-pointer-based tracking system that determines 6DOF relative to a single projection-based immersive VR setup while overcoming some of the limitations found in [MG03]: the reduced number of DOF (four motion-related DOF plus a mouse button), the dependency on just three laser points, and the vulnerability to lost points, which is already critical if a single point lies outside the interaction area. In addition, the hardware costs for the laser pointer interaction device and the digital camera are very low compared with other optical tracking systems.

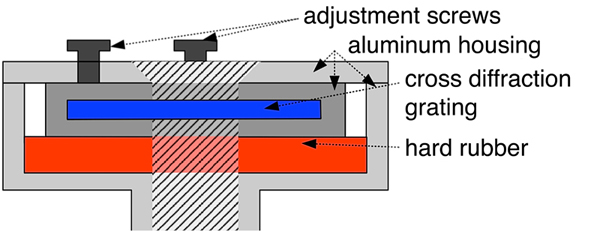

The augmented device consists primarily of three components: an off-the-shelf green laser pointer, a cross diffraction grating and a custom-built mount. The mount allows the cross diffraction grating to be attached and adjusted orthogonally to the laser pointer. An overview of the custom-built mount is illustrated in Figure 3. In combination, the components project a regular laser point grid onto a planar screen if the device is oriented perpendicular to the screen.

The choice of the laser output power and of the cross diffraction grating is governed by the constraint that either the whole projected grid or a part of it should cover the entire projection area with as many projected laser points as possible. In this case the complete resolution of the camera can be used to determine the 6DOF. Nevertheless, the output power of the laser pointer still has to comply with laser safety regulations. The physical parameters of the device, such as the laser output power and the wavelength, determine the characteristics of the projected grid, whose intensity decreases with a Gaussian-shaped profile from the central order towards higher orders. Together with the grating's groove period, the laser pointer's wavelength determines the angle of divergence between the laser beams and thus the size of the projected laser point grid on the projection screen.

For our augmented device we use a holographic cross diffraction grating of the kind commercially used for entertainment purposes such as laser shows. This grating has a groove period of approximately 6055.77 nm, i.e., a groove density of about 165.1 grooves per millimeter. In combination with a laser pointer of 532 nm wavelength, the angle of divergence between the laser beams of zero and first order is approximately 5.03 degrees. A green laser pointer was chosen because the emitting device (the laser) and the sensor (the camera) have to be closely matched: we use a single-CCD Bayer camera, which is most sensitive to green since it contains twice as many green pixels as red or blue ones. For laser safety reasons, the output power of the augmented laser pointer is lower than 1 mW. A laser pointer with an output power of 4 mW could be used, because the cross diffraction grating reduces the output power in the zero order to a quarter of its original value. To maintain the immersion of the VR setup, an IR laser pointer can be used in conjunction with an IR camera.
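The quoted angle of divergence can be checked with the grating equation for normal incidence, b·sin θ = m·λ. The following minimal Python sketch assumes an ideal thin grating illuminated at normal incidence; the function names are illustrative only.

```python
import math


def groove_period_nm(grooves_per_mm: float) -> float:
    """Convert a groove density in grooves per millimeter to a period in nm."""
    return 1e6 / grooves_per_mm


def divergence_angle_deg(wavelength_nm: float, period_nm: float, order: int = 1) -> float:
    """Angle between the zero-order beam and the given order of an ideal grating
    at normal incidence, from the grating equation b * sin(theta) = m * lambda."""
    s = order * wavelength_nm / period_nm
    if abs(s) > 1.0:
        # sin(theta) would exceed 1: this order does not leave the grating as a beam
        raise ValueError(f"order {order} does not propagate")
    return math.degrees(math.asin(s))


# Values from the text: 532 nm laser, groove period of about 6055.77 nm.
theta1 = divergence_angle_deg(532.0, 6055.77)
print(f"zero-to-first-order divergence: {theta1:.2f} deg")
print(f"165.1 grooves/mm -> period {groove_period_nm(165.1):.0f} nm")
```

With these values the sketch gives about 5.04 degrees, close to the approximate 5.03 degrees stated above, and a period of about 6057 nm for 165.1 grooves per millimeter. Orders for which m·λ/b exceeds 1 raise a `ValueError`.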

To mount the cross diffraction grating on the laser pointer a special mount has been developed. Figure 3 shows the assembly of the grating mount.

Figure 3. Cross-sectional illustration of the custom-built grating mount. The diffraction grating is fixed between a layer of hard rubber on one side and four adjustment screws on the opposite side, all inside an aluminum housing. The housing has a cylindrical opening with two additional plastic adjustment screws at the bottom to mount the head onto the standard laser pointer.

The mount consists of a separate aluminum housing with apertures for the laser beam, which encapsulates the diffraction grating. This aluminum housing rests on a hard rubber layer and allows the encapsulated diffraction grating to be adjusted about two axes. The central cross of the projected grid must not be bent; its alignment can be calibrated against an orthogonal line cross drawn on a sheet of paper.

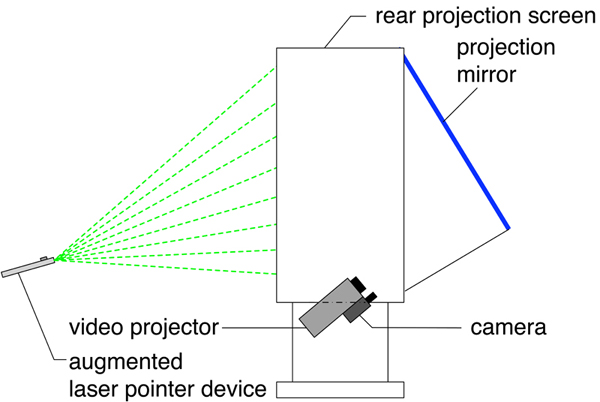

To detect the projected laser points, we use a single-CCD digital FireWire camera with a resolution of 640x480 pixels and a frame rate of 30 fps. The camera provides non-interpolated color images and thus allows more precise image processing. Figure 4 shows the camera setup for a rear-projection screen. The camera is mounted below the video projector and observes the projection screen via the projection mirror. This position provides an ideal image of the projection screen with minimal perspective distortion (see [OS02]).

Figure 4. Basic setup of the laser-pointer-based tracking system. The camera is mounted as close as possible to the projector's lens to minimize distortions between the projected and the recorded picture. The optical path is deflected by a projection mirror, which yields a compact display configuration with a much smaller footprint.

It is possible to install the camera on the viewer's side, but this requires more complex image processing, since the projection reaches its maximum intensity when seen from this position. Furthermore, the camera position would constrain the viewers' movements, as they must avoid occluding the camera.

The laser point detection in our implementation is based on a simple threshold operation that identifies bright pixels in the color of the laser pointer's wavelength. The subpixel-precise position is calculated as the intensity-weighted average of the pixels belonging to one laser spot, as described in [OS02]. For higher orders of the laser point grid the subpixel precision decreases, because there a laser spot covers only a single pixel of the camera image. To calculate the real 2D laser point positions in projection screen coordinates, the radial and the perspective distortion have to be measured beforehand. The radial distortion parameters are determined using a planar chessboard pattern. To correct the perspective distortion, a projective planar transformation called a homography is computed [HZ03], which maps the radially undistorted 2D camera image coordinates to projection screen coordinates. For this purpose, four undistorted point coordinates in the camera image and their corresponding projection screen coordinates are determined. These four points are obtained by pointing a standard laser pointer at the four corners of the projection screen and detecting the laser spots with subpixel precision according to [VHS05]; the resulting coordinates are then corrected for radial distortion. The corresponding projection screen coordinates are measured manually. We have chosen the upper left corner of the projection screen as the origin of the projection screen coordinate system.
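The two measurement steps described above, the intensity-weighted spot position and the camera-to-screen homography, can be sketched in plain Python as follows. This is an illustration under assumptions, not the authors' code: thresholding and radial undistortion are omitted (the input is assumed to be already undistorted), the homography is estimated with the basic four-point direct linear transformation with h33 fixed to 1 (one standard way, see [HZ03]), and all function names and example coordinates are hypothetical.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def weighted_centroid(pixels: Sequence[Tuple[int, int, float]]) -> Point:
    """Subpixel spot position: intensity-weighted mean of the (x, y, intensity)
    pixels that passed the threshold for one laser spot."""
    total = sum(w for _, _, w in pixels)
    if total <= 0:
        raise ValueError("spot has no positive intensity")
    return (sum(x * w for x, _, w in pixels) / total,
            sum(y * w for _, y, w in pixels) / total)


def _solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Gaussian elimination with partial pivoting for a small dense system."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate point configuration")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def homography_from_4_points(src: Sequence[Point], dst: Sequence[Point]) -> List[List[float]]:
    """3x3 homography H (with h33 = 1) that maps each src point onto its dst point."""
    a: List[List[float]] = []
    b: List[float] = []
    for (x, y), (u, v) in zip(src, dst):
        # u * (h31 x + h32 y + 1) = h11 x + h12 y + h13, and likewise for v
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y]); b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y]); b.append(v)
    h = _solve(a, b) + [1.0]
    return [h[0:3], h[3:6], h[6:9]]


def apply_homography(h: List[List[float]], p: Point) -> Point:
    """Map a camera point to screen coordinates (homogeneous division by w)."""
    x, y = p
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return ((h[0][0] * x + h[0][1] * y + h[0][2]) / w,
            (h[1][0] * x + h[1][1] * y + h[1][2]) / w)


# Hypothetical example: undistorted camera coordinates of the four screen corners
# (upper left first, clockwise) and a made-up 1200 mm x 900 mm screen.
corners_cam = [(102.4, 88.1), (531.7, 95.3), (540.2, 410.8), (96.5, 402.6)]
corners_screen = [(0.0, 0.0), (1200.0, 0.0), (1200.0, 900.0), (0.0, 900.0)]
H = homography_from_4_points(corners_cam, corners_screen)
spot = weighted_centroid([(320, 240, 80.0), (321, 240, 200.0), (321, 241, 120.0)])
print("spot on screen (mm):", apply_homography(H, spot))
```

Fixing h33 = 1 turns the four correspondences into eight linear equations in eight unknowns, so the system is exactly determined; with more than four reference points, a least-squares estimate would be the usual choice.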

Determining the 6DOF involves four basic steps:

- Calculate the physical laser point coordinates of the projected grid when the augmented device is oriented orthogonally to the projection screen.
- Assign the detected laser point positions to the 2D orders (m,n) of the grid model (see Section 3.5).
- Calculate the laser beam triangles for certain laser points (see Section 3.6).
- Compute the 6DOF of the augmented device (see Section 3.7).

For the calculation of the projected grid laser point coordinates, the laser pointer wavelength λ, the diffraction grating groove period b, the distance to the projection screen l and the 2D order positions (m,n), where m,n ∈ ℤ, are needed. In physics, order positions generally take positive values. For the calculation of the laser point grid, the order positions have been extended to negative values; this extended set represents the grid model in this work. It allows the calculation of a unique position for each order, with the zero order marking the origin of the laser point grid coordinate system.

The physical order position (x,y), where x,y ∈ ℝ, can be calculated by the following formulas, in which r denotes the distance between the (0,0)-order and the arbitrary order (m,n):

(1)

(2)

(3)

If n = 0 then:

(4)

(5)

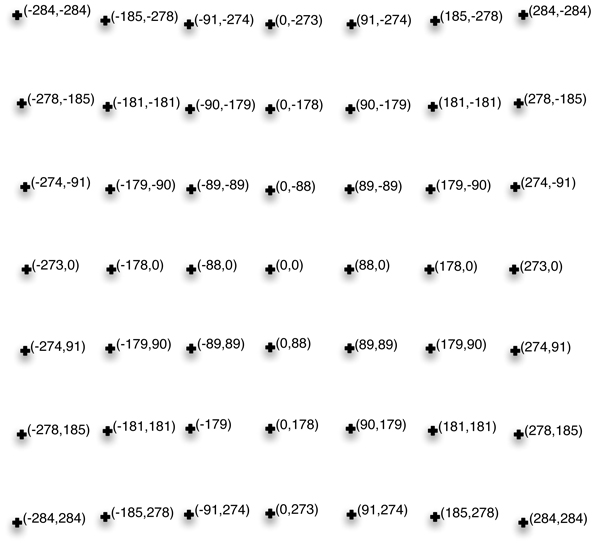

Figure 5 shows an example of the calculated laser point grid for the parameters of the augmented device (wavelength, groove period, distance). It illustrates that the projected laser points of adjacent orders are not equidistant in either the horizontal or the vertical direction.

Figure 5. Calculation of the laser point positions for a 2D cross diffraction grating: λ = 532 nm, b = 6055.77 nm, l = 1000 m (theoretical distance), orders (-3,-3) to (3,3).

The grid positions form the basis for calculating the angles of divergence between two orders, which in turn are required for the 6DOF determination.
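The following sketch computes grid positions and angles of divergence under a model we assume here: an ideal crossed grating with the same groove period b in x and y at normal incidence, where order (m,n) leaves the grating with direction cosines mλ/b and nλ/b. This need not match the exact form of equations (1) to (5), and the function names are hypothetical, but it reproduces the behavior shown in Figure 5: the spacing between adjacent orders grows towards higher orders.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def order_direction(m: int, n: int, wavelength: float, period: float) -> Vec3:
    """Unit direction of order (m, n) for an ideal crossed grating with the same
    period in x and y at normal incidence (direction cosines m*lambda/b, n*lambda/b)."""
    cx, cy = m * wavelength / period, n * wavelength / period
    cz_sq = 1.0 - cx * cx - cy * cy
    if cz_sq <= 0.0:
        raise ValueError(f"order ({m},{n}) does not propagate")
    return (cx, cy, math.sqrt(cz_sq))


def grid_position(m: int, n: int, wavelength: float, period: float,
                  distance: float) -> Tuple[float, float]:
    """Where order (m, n) hits a screen perpendicular to the zero order at `distance`."""
    cx, cy, cz = order_direction(m, n, wavelength, period)
    return (distance * cx / cz, distance * cy / cz)


def divergence_deg(o1: Tuple[int, int], o2: Tuple[int, int],
                   wavelength: float, period: float) -> float:
    """Angle of divergence between the beams of two orders, measured at the device."""
    d1 = order_direction(o1[0], o1[1], wavelength, period)
    d2 = order_direction(o2[0], o2[1], wavelength, period)
    dot = sum(a * b for a, b in zip(d1, d2))
    return math.degrees(math.acos(max(-1.0, min(1.0, dot))))


LAMBDA, PERIOD = 532e-9, 6055.77e-9       # both in meters
xs = [grid_position(m, 0, LAMBDA, PERIOD, 1.0)[0] for m in range(4)]
steps = [b - a for a, b in zip(xs, xs[1:])]
print("spacing of horizontal orders at 1 m:", [f"{s * 1000:.1f} mm" for s in steps])
print(f"angle (0,0)-(1,1): {divergence_deg((0, 0), (1, 1), LAMBDA, PERIOD):.2f} deg")
```

The angle between two order beams is obtained from the dot product of their unit direction vectors; for the orders (0,0) and (1,0) it reduces to the one-dimensional grating equation.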

The detected laser points have to be mapped to the 2D orders (m,n) of the grid model. This grid assignment is not unique because of the laser point grid's rotational symmetry in the projection plane: the mapping of the grid model changes with every quarter turn. For the complete grid assignment, the following steps are processed:

- Assignment of the zero order (0,0) to the grid model; the zero order can be identified by the pixel size of its laser spot.
- Determination and validation of the 3x3 neighborhood surrounding the zero order (0,0) and its assignment to the grid model.
- If the first 3x3 neighborhood is incomplete or invalid, the determination and validation of a 3x3 neighborhood is repeated for the previously found neighbors surrounding the zero order until a valid neighborhood has been found.
- Mapping of the remaining orders, based on the first assigned orders, using the laser beam triangle calculation (see Section 3.6).

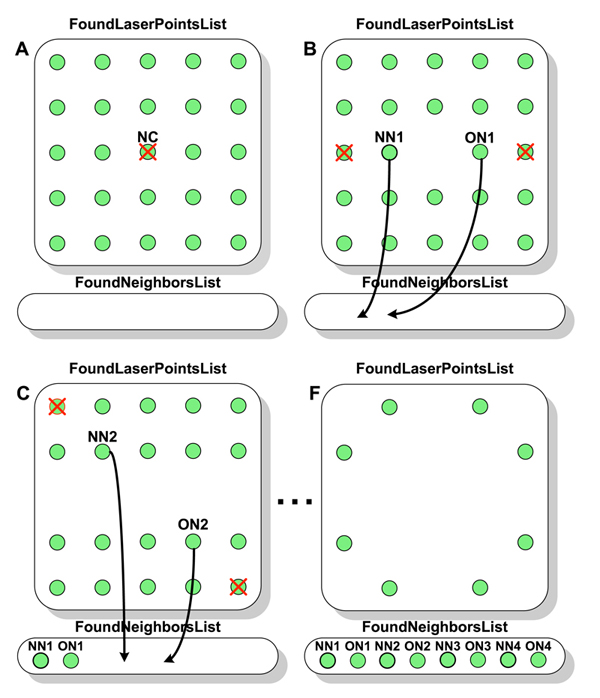

To find the 3x3 neighborhood of the order under examination, the search method illustrated in Figure 6 is applied, using the following notation: NC is the center of the neighborhood, NN the nearest neighbor, ON the opposite neighbor, FoundLaserPointsList the list of all detected laser points and FoundNeighborsList the list of found neighbors. In the first step of the neighborhood search, the examined order, i.e., the center of the neighborhood NC, is deleted from the FoundLaserPointsList (see Figure 6 A). Afterwards, the following steps are applied four times (see Figure 6 B-F):

- Find the laser point NN nearest to the examined order NC in the FoundLaserPointsList.
- Find the nearest laser point ON lying opposite the previously found laser point NN with respect to the examined order NC, and add NN and ON to the FoundNeighborsList.
- Delete all laser points from the FoundLaserPointsList that approximately lie on the straight line through the two previously found laser points NN and ON.
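The three steps of the neighborhood search can be sketched in Python as follows. The tolerances are assumptions, since the text gives no values: a candidate counts as "opposite" if it lies within 15 degrees of the direction from NN through NC, and as "approximately on the straight line" if it is within 2 pixels of it. The function names are hypothetical, and the subsequent polygon validation is not shown.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def _line_distance(p: Point, a: Point, b: Point) -> float:
    """Distance of p from the infinite straight line through a and b."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    return abs(dx * (p[1] - a[1]) - dy * (p[0] - a[0])) / math.hypot(dx, dy)


def find_neighbors(nc: Point, found_laser_points: Sequence[Point],
                   angle_tol_deg: float = 15.0, line_tol: float = 2.0) -> List[Point]:
    """NN/ON search around the examined order nc; returns the FoundNeighborsList."""
    remaining = [p for p in found_laser_points if p != nc]    # step A: delete NC
    neighbors: List[Point] = []
    cos_tol = math.cos(math.radians(angle_tol_deg))
    for _ in range(4):                                       # steps B-F, four times
        if not remaining:
            break
        nn = min(remaining, key=lambda p: math.dist(p, nc))
        # ON must lie roughly in the direction from NN through NC (opposite side)
        ux, uy = nc[0] - nn[0], nc[1] - nn[1]
        u_len = math.hypot(ux, uy)
        opposite = [p for p in remaining
                    if ux * (p[0] - nc[0]) + uy * (p[1] - nc[1])
                    >= cos_tol * u_len * math.dist(p, nc)]
        if not opposite:
            break  # incomplete neighborhood; the validation step will reject it
        on = min(opposite, key=lambda p: math.dist(p, nc))
        neighbors += [nn, on]
        # drop every point approximately on the straight line through NN and ON
        remaining = [p for p in remaining if _line_distance(p, nn, on) > line_tol]
    return neighbors
```

On an undistorted 5x5 grid centered on NC, the four iterations return the horizontal, vertical and both diagonal neighbor pairs, i.e., the complete 3x3 neighborhood; removing each line after use is what keeps farther points such as (2,0) from being picked as neighbors.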

The laser points in the FoundNeighborsList are then validated by testing whether they form a 4-sided polygon surrounding the examined order. The valid 3x3 neighborhood is assigned to the grid model. This first mapping forms the basis for assigning the remaining detected laser points: it allows the grid laser point positions to be predicted using the laser beam triangle calculation (see Section 3.6). The predicted positions are compared with the detected laser points and assigned to the grid model if they are approximately equal.
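Comparing predicted and detected positions can be sketched as a greedy nearest-neighbor matching. The prediction itself (via the laser beam triangles) is assumed to be given; the tolerance, the greedy strategy and the function name are our assumptions, since the text only requires the positions to be approximately equal.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]
Order = Tuple[int, int]


def assign_predicted(predicted: Dict[Order, Point], detected: List[Point],
                     tol: float) -> Dict[Order, Point]:
    """Assign predicted grid orders to detected laser points that lie within `tol`.

    Candidate pairs are processed from the closest to the farthest, and each
    detected point is assigned to at most one order (greedy matching)."""
    pairs = sorted(
        (math.dist(p, q), order, i)
        for order, p in predicted.items()
        for i, q in enumerate(detected)
        if math.dist(p, q) <= tol
    )
    assigned: Dict[Order, Point] = {}
    used = set()
    for _, order, i in pairs:
        if order not in assigned and i not in used:
            assigned[order] = detected[i]
            used.add(i)
    return assigned
```

Orders whose prediction has no detected point within the tolerance simply stay unassigned, which mirrors the case of laser points lying outside the camera's view.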

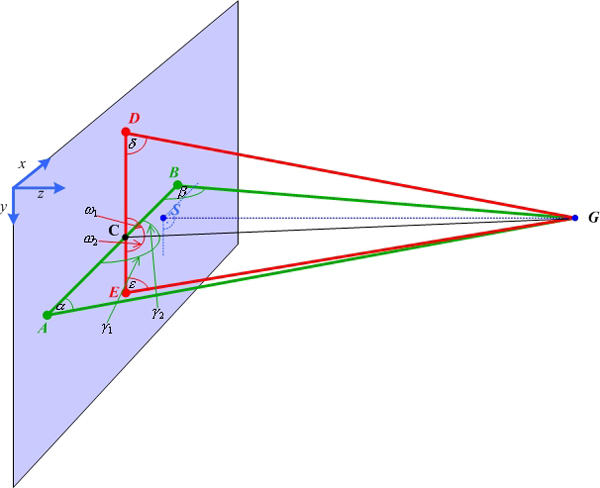

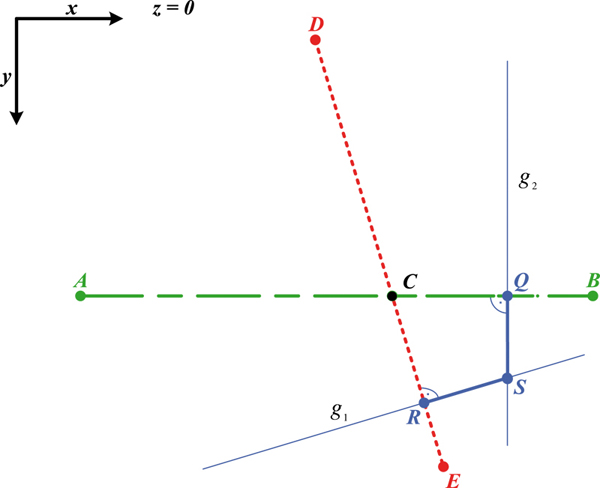

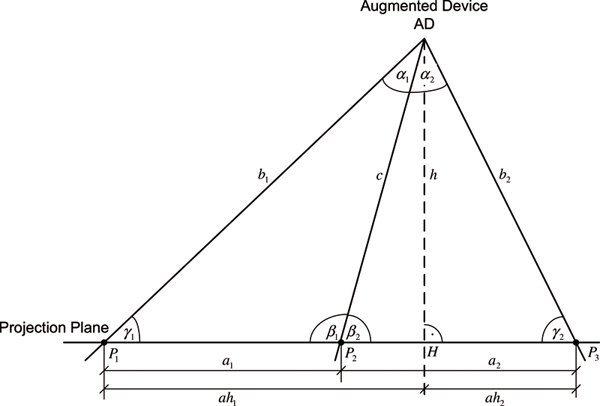

The grid laser points assigned to the grid model provide the additional information needed to calculate the angles of divergence between two arbitrary orders. At least five laser point positions of the grid model are necessary to calculate the 6DOF. These five points have to lie on two straight lines, each defined by three laser point positions, and the two lines have to intersect in one shared point, as shown in Figure 9. Each straight line, together with the position of the augmented device (AD), forms a triangle, which will be denoted as laser beam triangle. Figure 7 shows such a laser beam triangle with the laser points P1, P2, P3, the augmented device AD, the laser beam distances b1, c, b2, the laser point distances a1, a2 and the angles of divergence α1, α2.

Figure 7. Side view of a laser beam triangle. Two different triangles are required for the 6DOF calculation. Each triangle is defined by the position of the augmented device (AD) and two points of the projected grid (see also Figure 9). As an additional constraint, the two triangles must have a common intersection point.

All parameters of the laser beam triangle can be calculated from the distances a1 and a2 and the known angles α1 and α2. Some trigonometric relationships lead to the following equation:

(6)

Applying the law of cosines yields:

(7)

Solving equation 6 for b2 and substituting it into equation 7 gives:

(8)

Solving the quadratic equation under the assumption that a1, a2 ∈ ℝ⁺ and α1, α2 ∈ [0°, 90°] yields:

(9)

In a similar way the distance b2 can be calculated. The other parameters of the laser beam triangle γ1, γ2, β1, β2, c, h, ah1, ah2 can be computed by simple trigonometric relationships.
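One way to carry out the derivation described above is sketched below. It assumes that the trigonometric relationship behind equation (6) is the ratio obtained by equating the areas of the two sub-triangles, which share the height h: a1/a2 = b1·sin α1 / (b2·sin α2). Combined with the law of cosines for the whole triangle, this yields b1, b2, c and h in closed form. This is our reconstruction, not necessarily identical in form to equations (6) to (9), and the class and field names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class BeamTriangle:
    b1: float            # distance device -> P1
    c: float             # distance device -> P2 (middle point)
    b2: float            # distance device -> P3
    h: float             # distance of the device from the straight line P1P3
    foot_from_p1: float  # signed distance from P1 to the foot of h, towards P3


def solve_beam_triangle(a1: float, a2: float, alpha1: float, alpha2: float) -> BeamTriangle:
    """a1 = |P1P2|, a2 = |P2P3|; alpha1, alpha2 = angles of divergence in radians."""
    # Both sub-triangles share the apex and the height h; equating their areas
    # (a*h/2 versus b*c*sin(alpha)/2) gives a1/a2 = b1*sin(alpha1) / (b2*sin(alpha2)).
    k = (a2 * math.sin(alpha1)) / (a1 * math.sin(alpha2))    # so that b2 = k * b1
    gamma = alpha1 + alpha2                                   # angle P1-device-P3
    base = a1 + a2
    # Law of cosines: base^2 = b1^2 + b2^2 - 2*b1*b2*cos(gamma); keep the positive root.
    b1 = base / math.sqrt(1.0 + k * k - 2.0 * k * math.cos(gamma))
    b2 = k * b1
    h = b1 * b2 * math.sin(gamma) / base    # from the area of the whole triangle
    c = a1 * h / (b1 * math.sin(alpha1))    # from the area of the first sub-triangle
    foot = (b1 * b1 - b2 * b2 + base * base) / (2.0 * base)
    return BeamTriangle(b1, c, b2, h, foot)
```

Because α1 and α2 lie between 0 and 90 degrees and a1, a2 are positive, the expression under the square root is positive and only the positive root is meaningful, matching the assumption stated before equation (9).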

The computation of the 3D position and orientation of the augmented device is illustrated in Figures 8 and 9. In these figures the five projected laser points are denoted as A(ax|ay|0), B(bx|by|0), C(cx|cy|0), D(dx|dy|0) and E(ex|ey|0).

The points Q(qx|qy|0) and R(rx|ry|0) denote the perpendicular bases (feet of the perpendiculars) from the augmented device position G(gx|gy|gz) to the straight lines AB and DE. With the five laser point positions, the distances |AC|, |CB|, |DC| and |CE| can be determined. The angles of divergence ∢(DGC), ∢(CGE), ∢(AGC) and ∢(CGB) can be computed from the known 2D orders (m,n) of the laser points. This information allows the calculation of the laser beam triangles (AGB) and (DGE). To obtain the perpendicular base S(sx|sy|0) from G to the projection plane, the intersection of the following linear equations is computed:

(10)

(11)

and

(12)

The intersection point S is thus given by:

(13)

The x and y coordinates of the augmented device are given by sx and sy, while the z coordinate is given by the length |GS|:

(14)

The 3D position of the augmented device G is:

(15)
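The construction of S and G can be sketched as follows. The sketch relies on the theorem of three perpendiculars: since GS is perpendicular to the projection plane and GQ is perpendicular to AB, SQ is perpendicular to AB as well (likewise SR and DE). S is therefore the intersection of the perpendicular to AB through Q and the perpendicular to DE through R, and |GS| follows from Pythagoras with |GQ| = h of triangle (AGB). This is our own formulation, not necessarily the exact form of equations (10) to (15); Q and R would come from the laser beam triangles, e.g., by moving the foot distance along AB from A, and all names are hypothetical.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def foot_point(p_start: Point, p_end: Point, t: float) -> Point:
    """Point at signed distance t from p_start along the line towards p_end."""
    dx, dy = p_end[0] - p_start[0], p_end[1] - p_start[1]
    n = math.hypot(dx, dy)
    return (p_start[0] + t * dx / n, p_start[1] + t * dy / n)


def device_position(a: Point, b: Point, q: Point, d: Point, e: Point, r: Point,
                    h_ab: float) -> Tuple[float, float, float]:
    """S = intersection of the perpendicular to AB through Q and the perpendicular
    to DE through R. Returns (sx, sy, z) with z = |GS| = sqrt(h_ab^2 - |QS|^2)."""
    # Points X on the perpendicular through Q satisfy (X - Q) . (B - A) = 0,
    # points on the perpendicular through R satisfy (X - R) . (E - D) = 0.
    u = (b[0] - a[0], b[1] - a[1])
    v = (e[0] - d[0], e[1] - d[1])
    rhs1 = u[0] * q[0] + u[1] * q[1]
    rhs2 = v[0] * r[0] + v[1] * r[1]
    det = u[0] * v[1] - u[1] * v[0]
    if abs(det) < 1e-12:
        raise ValueError("lines AB and DE are parallel")
    sx = (rhs1 * v[1] - u[1] * rhs2) / det     # Cramer's rule
    sy = (u[0] * rhs2 - rhs1 * v[0]) / det
    qs_sq = (sx - q[0]) ** 2 + (sy - q[1]) ** 2
    z = math.sqrt(max(h_ab * h_ab - qs_sq, 0.0))
    return sx, sy, z
```

The height of the second triangle, |GR|, gives a second estimate of z via |RS|; averaging both would be one simple way to reduce noise.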

The direction vector of the pointing beam is given by:

(16)

With the position G and the direction vector of the pointing beam, five DOF are uniquely determined. The last DOF is approximated by the rotation angle between the straight line through the (m,0)-orders and the x-axis. Because of the grid's rotational symmetry, this rotation angle can only be determined between 0 and 90 degrees. Larger rotation angles can be computed by continuously tracking the rotation of the laser point grid.
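The quarter-turn ambiguity of the roll angle and its resolution by continuous tracking can be sketched as follows. The assumption that the roll changes by less than 45 degrees between consecutive frames is ours; at the camera's 30 fps this allows rotations of up to 1350 degrees per second. All names are hypothetical.

```python
import math


def roll_mod90(p_a, p_b):
    """Angle in [0, 90) degrees between the line through two (m,0)-orders and the x-axis."""
    return math.degrees(math.atan2(p_b[1] - p_a[1], p_b[0] - p_a[0])) % 90.0


class RollTracker:
    """Turns the quarter-turn-ambiguous roll into a continuous angle, assuming the
    true roll changes by less than 45 degrees between two consecutive frames."""

    def __init__(self, initial_mod90: float = 0.0) -> None:
        self.prev = initial_mod90
        self.total = initial_mod90

    def update(self, mod90: float) -> float:
        # pick the representative of the change (mod 90) that is closest to zero
        delta = (mod90 - self.prev + 45.0) % 90.0 - 45.0
        self.total += delta
        self.prev = mod90
        return self.total
```

Feeding the tracker the per-frame value from `roll_mod90` yields a continuous roll angle, so that, e.g., a sequence of 80, 0 and 10 degrees is interpreted as 80, 90 and 100 degrees.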

We have presented an inexpensive new active optical tracking technique that determines 6DOF relative to a single projection screen. The device's 6DOF (five absolute and one relative) enable intuitive, direct interaction with a virtual environment.

First tests reveal that the accuracy of the 6DOF determination decreases stepwise with the number of detected laser points belonging to one laser beam triangle. To improve the accuracy, a grayscale FireWire camera with a higher resolution could enhance the subpixel-precise laser point detection. Furthermore, a laser pointer with a higher output power and a diffraction grating with a smaller groove period, which increases the angle of divergence between the orders, could also improve the laser point detection. Our current device uses a 0.5 mW laser source. German regulations define a maximum power of 1 mW (per laser point), which would allow a 4 mW laser source resulting in 1 mW at the central order.

We have found that reflections of the laser spots from the projection surface towards the viewer are slightly noticeable and may reduce immersion. Since the overall setup is a rather small, portable single-screen projection device, we consider this drawback negligible compared with the easy setup and use of the interaction device. An alternative is the concurrently developed Sceptre device [WNG06], which uses infrared laser light instead.

To support multiple devices, several grayscale cameras with wavelength bandpass filters can be combined with laser pointers of different wavelengths. Another alternative is the time-division multiplexing technique proposed by Pavlovych and Stuerzlinger [PS04]. The initial prototype does not apply any post-processing, i.e., filtering, to the calculated 6DOF data. Hence, there is slight but noticeable jitter. Subjectively, this does not interfere with the interaction. Still, future work in this area would require a comprehensive usability test, possibly followed by technical refinements. For example, a standard post-processing step would be to apply a Kalman filter [Kal60] to smooth the computed 6DOF.
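A Kalman-filter-based smoothing step [Kal60] could look like the following minimal sketch. It assumes that each DOF is filtered independently with a random-walk (constant-position) model; the noise parameters q and r are hypothetical tuning values, and the roll would be filtered after unwrapping to avoid jumps at the 90-degree boundary.

```python
from dataclasses import dataclass


@dataclass
class ScalarKalman:
    """Kalman filter for one DOF with a random-walk (constant-position) model."""
    q: float            # process noise variance per frame (how much the DOF may move)
    r: float            # measurement noise variance (jitter of the raw 6DOF data)
    x: float = 0.0      # current estimate
    p: float = 1e6      # estimate variance; large, so the first measurement dominates

    def update(self, z: float) -> float:
        self.p += self.q                   # predict: same value, growing uncertainty
        k = self.p / (self.p + self.r)     # Kalman gain
        self.x += k * (z - self.x)         # correct with the new measurement z
        self.p *= 1.0 - k
        return self.x


# Hypothetical tuning: one filter per DOF (x, y, z, yaw, pitch, unwrapped roll).
filters = [ScalarKalman(q=1e-4, r=1e-2) for _ in range(6)]


def smooth(raw_6dof):
    """Filter one frame of raw 6DOF values, one independent filter per DOF."""
    return [f.update(v) for f, v in zip(filters, raw_6dof)]
```

A larger r relative to q smooths more strongly but adds lag; a constant-velocity state model is a common alternative when lag matters.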

[BL06] Monoscopic 6DOF Detection using a Laser Pointer, Virtuelle und Erweiterte Realität, 3rd Workshop of the GI special interest group VR/AR, 2006, S. Müller and G. Zachmann (Eds.), pp. 143–154, ISBN 978-3-8322-6367-6.

[HZ03] Multiple View Geometry in Computer Vision, Second Edition, Cambridge University Press, 2003, ISBN 0-521-54051-8.

[Kal60] A New Approach to Linear Filtering and Prediction Problems, Transactions of the ASME, Journal of Basic Engineering, Series D, 1960, pp. 35–45.

[MG03] The optical tweezers: multiple-point interaction technique, VRST '03: Proceedings of the ACM Symposium on Virtual Reality Software and Technology, Osaka, Japan, 2003, ACM Press, New York, NY, USA, pp. 184–187, ISBN 1-58113-569-6.

[OS02] Laser Pointers as Collaborative Pointing Devices, Proceedings of Graphics Interface 2002, Michael McCool (Ed.), AK Peters, Ltd., May 2002, pp. 141–150, ISBN 1-56881-183-7.

[PS04] Laser Pointers as Interaction Devices for Collaborative Pervasive Computing, Advances in Pervasive Computing: A Collection of Contributions Presented at PERVASIVE 2004, Alois Ferscha, Horst Hörtner and Gabriele Kotsis (Eds.), OCG, April 2004, pp. 315–320, ISBN 3-8540-3176-9.

[VHS05] The Hedgehog: A Novel Optical Tracking Method for Spatially Immersive Displays, IEEE Virtual Reality 2005, pp. 83–89, ISBN 0-7803-8929-8.

[WNG06] Sceptre: An Infrared Laser Tracking System for Virtual Environments, Proceedings of the ACM Symposium on Virtual Reality Software and Technology (VRST 2006), 2006, pp. 45–50, ISBN 1-59593-321-2.


License

Any party may pass on this Work by electronic means and make it available for download under the terms and conditions of the Digital Peer Publishing License. The text of the license may be accessed and retrieved at http://www.dipp.nrw.de/lizenzen/dppl/dppl/DPPL_v2_en_06-2004.html.

Recommended citation

Marc Erich Latoschik, and Elmar Bomberg, Augmenting a Laser Pointer with a Diffraction Grating for Monoscopic 6DOF Detection. JVRB - Journal of Virtual Reality and Broadcasting, 4(2007), no. 14. (urn:nbn:de:0009-6-12754)

Please provide the exact URL and date of your last visit when citing this article.