VRIC 2008

Real Walking through Virtual Environments by Redirection Techniques

urn:nbn:de:0009-6-17472

Abstract

We present redirection techniques that support exploration of large-scale virtual environments (VEs) by means of real walking. We quantify to what degree users can unknowingly be redirected in order to guide them through VEs in which virtual paths differ from the physical paths. We further introduce the concept of dynamic passive haptics by which any number of virtual objects can be mapped to real physical proxy props having similar haptic properties (i. e., size, shape, and surface structure), such that the user can sense these virtual objects by touching their real world counterparts. Dynamic passive haptics provides the user with the illusion of interacting with a desired virtual object by redirecting her to the corresponding proxy prop. We describe the concepts of generic redirected walking and dynamic passive haptics and present experiments in which we have evaluated these concepts. Furthermore, we discuss implications that have been derived from a user study, and we present approaches that derive physical paths which may vary from the virtual counterparts.

Keywords: Virtual Locomotion User Interfaces, Redirected Walking, Perception

Subjects: Perception, Virtual Environment, Locomotion

Walking is the most basic and intuitive way of moving within the real world. Taking advantage of such an active and dynamic ability to navigate through large immersive virtual environments (IVEs) is of great interest for many 3D applications demanding locomotion, such as urban planning, tourism, 3D games etc. Although these domains are inherently three-dimensional and their applications would benefit from exploration by means of real walking, many such VE systems do not allow users to walk in a natural manner.

In many existing immersive VE systems, the user navigates with hand-based input devices in order to specify direction and speed as well as acceleration and deceleration of movements [ WCF05 ]. Most IVEs do not allow real walking [ WCF05 ]. Advanced visual simulations often provide a good sense of locomotion, but visual stimuli by themselves can hardly address the vestibular-proprioceptive system. An obvious approach to support real walking through an IVE is to transfer the user's tracked head movements to changes of the virtual camera in the virtual world by means of a one-to-one mapping. This technique has the drawback that the user's movements are restricted by the rather limited range of the tracking sensors and a small workspace in the real world. Therefore, virtual locomotion methods are needed that enable walking over large distances in the virtual world while remaining within a relatively small space in the real world. Various prototypes of interface devices have been developed to prevent a displacement in the real world. These devices include torus-shaped omni-directional treadmills [ BS02, BSH02 ], motion foot pads, robot tiles [ IHT06, IYFN05 ] and motion carpets [ STU07 ]. Although these hardware systems represent enormous technological achievements, they are still very expensive and will not be generally accessible in the foreseeable future. Hence there is a tremendous demand for more applicable approaches.

As a solution to this challenge, traveling by exploiting walk-like gestures has been proposed in many different variants, giving the user the impression of walking. For example, the walking-in-place approach exploits walk-like gestures to travel through an IVE, while the user remains physically at nearly the same position [ UAW99, Su07, WNM06, FWW08 ]. However, real walking has been shown to be a more presence-enhancing locomotion technique than other navigation metaphors [ UAW99 ].

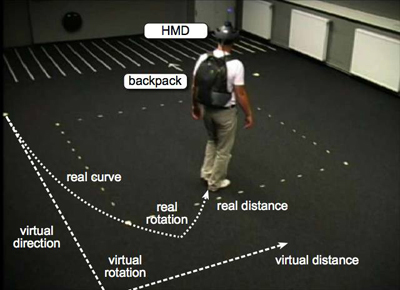

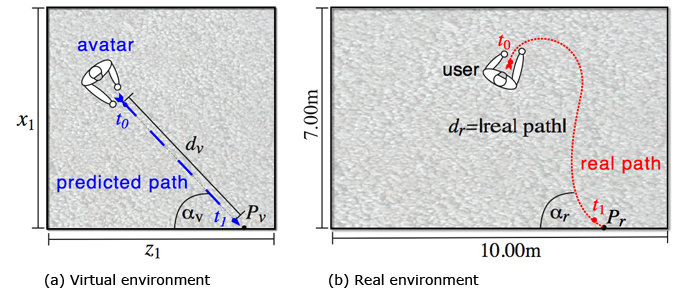

Cognition and perception research suggests that cost-efficient as well as natural alternatives exist. It is known from perception psychology that vision often dominates proprioceptive and vestibular sensation when the senses disagree [ DB78, Ber00 ]. Human participants using only vision to judge their motion through a virtual scene can successfully estimate their momentary direction of self-motion but are not as good at perceiving their paths of travel [ LBvdB99, BIL00 ]. Therefore, since users tend to unwittingly compensate for small inconsistencies during walking, it is possible to guide them along paths in the real world that differ from the perceived path in the virtual world. This so-called redirected walking enables users to explore a virtual world that is considerably larger than the tracked working space [ Raz05 ] (see Figure 1).

In this article, we present an evaluation of redirected walking and derive implications for the design process of a virtual locomotion interface. For this evaluation, we extend redirected walking by generic aspects, i. e., support for translations, rotations and curvatures. We present a model which describes how humans move through virtual environments. We describe a user study that derives optimal parameterizations for these techniques.

Our virtual locomotion interface allows users to explore 3D environments by means of real walking in such a way that the user's presence is not disturbed by a rather small interaction space or physical objects present in the real environment. Our approach can be used in any fully-immersive VE-setup without a need for special hardware to support walking or haptics. For these reasons we believe that these techniques make exploration of VEs more natural and thus ubiquitously available.

The remainder of this paper is structured as follows. Section 2 summarizes related work. Section 3 describes our concepts of redirected walking and presents the human locomotion triple, which models how humans move through VEs. Section 4 presents a user study we conducted in order to quantify to what degree users can be redirected without noticing the discrepancy. Section 5 discusses implications for the design of a virtual locomotion interface based on the results of this study. Section 6 concludes the paper and gives an overview of future work.

Locomotion and perception in IVEs are the focus of many research groups analyzing perception in both the real world and virtual worlds. For example, researchers have found that distances in virtual worlds are underestimated in comparison to the real world [ LK03, IAR06, IKP07 ], that visual speed during walking is underestimated in VEs [ BSD05 ] and that the distance one has traveled is also underestimated [ FLKB07 ]. Sometimes, users have general difficulties in orienting themselves in virtual worlds [ RW07 ].

The real world remains stationary as we rotate our heads and we perceive the world to be stable even when the world's image moves on the retina. Visual and extra-retinal cues help us to perceive the world as stable [ Wal87, BvdHV94, Wer94 ]. Extraretinal cues come from the vestibular system, proprioception, our cognitive model of the world, and from an efference copy of the motor commands that move the respective body parts. When one or more of these cues conflicts with other cues, as is often the case for IVEs (due to incorrect field-of-view, tracking errors, latency, etc.), the virtual world may appear to be spatially unstable.

Experiments demonstrate that subjects tolerate a certain amount of inconsistency between visual and proprioceptive sensation [ SBRK08, JPSW08, PWF08, KBMF05, BRP05, Raz05 ].

Redirected walking [ Raz05 ] provides a promising solution to the problem of limited tracking space and the challenge of providing users with the ability to explore an IVE by walking. With this approach, the user is redirected via manipulations applied to the displayed scene, causing users to unknowingly compensate by repositioning and/or reorienting themselves.

Different approaches to redirect a user in an IVE have been suggested. An obvious approach is to scale translational movements, for example, to cover a virtual distance that is larger than the distance walked in the physical space. Interrante et al. suggest applying the scaling exclusively to the main walking direction in order to prevent unintended lateral shifts [ IRA07 ]. With most reorientation techniques, the virtual world is imperceptibly rotated around the center of a stationary user until she is oriented in such a way that no physical obstacles are in front of her [ PWF08, Raz05, KBMF05 ]. Then, the user can continue to walk in the desired virtual direction. Alternatively, reorientation can also be applied while the user walks [ GNRH05, SBRK08, Raz05 ]. For instance, if the user wants to walk straight ahead for a long distance in the virtual world, small rotations of the camera redirect her to walk unconsciously on an arc in the opposite direction in the real world [ Raz05 ].

Redirection techniques are applied in robotics for controlling a remote robot by walking [ GNRH05 ]. For such scenarios much effort is required to avoid collisions and sophisticated path prediction is essential [ GNRH05, NHS04 ]. These techniques guide users on physical paths for which lengths as well as turning angles of the visually perceived paths are maintained, but the user observes the discrepancy between both worlds.

Until now not much research has been undertaken in order to identify thresholds which indicate the tolerable amount of deviation between vision and proprioception while the user is moving. Preliminary studies [ SBRK08, PWF08, Raz05 ] show that in general redirected walking works as long as the subjects are not focused on detecting the manipulation. In these experiments, subjects reported afterwards whether they had noticed a manipulation or not. Quantitative analyses have not been undertaken. Some work has been done in order to identify thresholds for detecting scene motion during head rotation [ Wal87, JPSW08 ], but walking was not considered in these experiments.

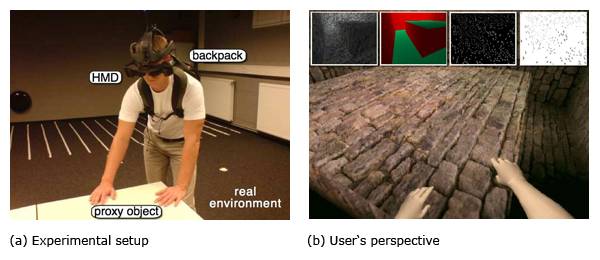

Besides natural navigation, multi-sensory perception of an IVE increases the user's sense of presence [ IMWB01 ]. Whereas graphics and sound rendering have matured so much that realistic synthesis of real world scenarios is possible, generation of haptic stimuli still represents a vast area for research. Tremendous effort has been undertaken to support active haptic feedback by specialized hardware which generates certain haptic stimuli [ CD05 ]. These technologies, such as force feedback devices, can provide a compelling sense of touch, but are expensive and limit the size of the user's working space due to devices and wires. A simpler solution is to use passive haptic feedback: physical props registered to virtual objects that provide real haptic feedback to the user. By touching such a prop the user gets the impression of interacting with an associated virtual object seen in an HMD [ Lin99 ] (see Figure 2). Passive haptic feedback is very compelling, but a different physical object is needed for each virtual object that shall provide haptic feedback [ Ins01 ]. Since the interaction space is constrained, only a few physical props can be supported. Moreover, the presence of physical props in the interaction space prevents exploration of other parts of the virtual world not represented by the current physical setup. For example, a proxy prop that represents a virtual object at some location in the VE may obstruct the user when walking through a different location in the virtual world. Thus exploration of large scale environments and support of passive haptic feedback seem to be mutually exclusive.

Redirected walking and passive haptics have been combined in order to address these challenges [ KBMF05, SBRK08 ]. If the user approaches an object in the virtual world she is guided to a corresponding physical prop. Otherwise the user is guided around obstacles in the working space in order to avoid collisions. Props do not have to be aligned with their virtual world counterparts nor do they have to provide haptic feedback identical to the visual representation. Experiments have shown that physical objects can provide passive haptic feedback for virtual objects with a different visual appearance and with similar, but not necessarily the same, haptic capabilities or alignment [ SBRK08, BRP05 ] (see Figure 2(a) and (b)). Hence, virtual objects can be sensed by means of real proxy props having similar haptic properties, i. e., size, shape, texture and location. The mapping from virtual to real objects need not be one-to-one. Since the mapping as well as the visualization of virtual objects can be changed dynamically during runtime, usually a small number of proxy props suffices to represent a much larger number of virtual objects. By redirecting the user to a preassigned proxy object, the user gets the illusion of interacting with a desired virtual object.

In summary, substantial efforts have been made to allow a user to walk through an IVE which is larger than the laboratory space and to experience the environment with support of passive haptic feedback, but the concepts can be improved upon.

Redirected walking can be implemented using gains which define how tracked real-world motions are mapped to the VE. These gains are specified with respect to a coordinate system. For example, they can be defined by uniform scaling factors that are applied to the virtual world registered with the tracking coordinate system such that all motions are scaled likewise.

We introduce the human locomotion triple (HLT) (s,u,w), consisting of three normalized vectors: strafe vector s, up vector u and walk-direction vector w. w can be determined by the actual walking direction or using proprioceptive information such as the orientation of the limbs or the view direction. In our experiments we define w by the current tracked walking direction. The strafe vector s, a.k.a. right vector, is orthogonal to the direction of walk and parallel to the walk plane. We define u as the up vector of the tracked head orientation. Whereas the direction of walk and the strafe vector are orthogonal to each other, the up vector u is not constrained to the cross product of s and w. Hence, if a user walks up a slope the direction of walk is defined according to the walk plane's orientation, whereas the up vector is not orthogonal to this tilted plane. When walking on slopes humans tend to lean forward, so the up vector remains parallel to the direction of gravity. As long as the direction of walk satisfies w ≠ (0,1,0), the HLT constitutes a coordinate system. In the following sections we describe how gains can be applied to the HLT.
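The construction of the triple can be sketched in a few lines of Python. This is a minimal illustration, not the implementation used in the study: the function names, the `plane_normal` parameter and the right-handed, y-up convention are assumptions introduced here.

```python
import numpy as np

def normalize(v):
    """Return v scaled to unit length."""
    v = np.asarray(v, dtype=float)
    n = np.linalg.norm(v)
    if n == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return v / n

def locomotion_triple(walk_dir, head_up, plane_normal=(0.0, 1.0, 0.0)):
    """Build (s, u, w) from a tracked walk direction and head up vector.

    plane_normal is the normal of the walk plane (an assumption of this
    sketch; the text only states that s is parallel to the walk plane).
    """
    n = normalize(plane_normal)
    w = np.asarray(walk_dir, dtype=float)
    # Project the walk direction into the walk plane, then normalize.
    # A walk direction parallel to the normal has no projection and fails.
    w = normalize(w - np.dot(w, n) * n)
    # s is orthogonal to w and lies in the walk plane (right-handed, y-up).
    s = normalize(np.cross(w, n))
    # u comes from the head orientation and is NOT forced to equal s x w.
    u = normalize(head_up)
    return s, u, w
```

On a tilted walk plane the returned u stays aligned with the head (and thus roughly with gravity), so u and w are in general not orthogonal, which matches the description above.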

Figure 1. Redirected walking scenario: a user walks through the real environment on a different path with a different length in comparison to the perceived path in the virtual world.

The tracking system detects a change of the user's real world position defined by the vector Treal = Pcur - Ppre, where Pcur is the current position and Ppre is the previous position. Treal is mapped one-to-one to the virtual camera with respect to the registration between the virtual scene and the tracking coordinate system. A translation gain gT ∈ ℝ³ is defined for each component of the HLT (see Section 3.1) by the quotient of the mapped virtual world translation Tvirtual and the tracked real world translation Treal, i. e., $g_T := \frac{T_{virtual}}{T_{real}}$. Hence, generic gains for translational movements can be expressed by gT[s], gT[u], gT[w], where each component is applied to the corresponding vector s, u and w respectively composing the translation. In our experiments we have focussed on sensitivity to translation gains in the walk direction gT[w].
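As an illustration of how the component-wise gains gT[s], gT[u], gT[w] might be applied, the following sketch decomposes a tracked translation along the HLT axes, scales each component, and recombines them. The function name and argument layout are hypothetical. Because u need not be orthogonal to s and w, the sketch solves a small linear system rather than using dot products; this is one possible choice, not necessarily the one made in the original system.

```python
import numpy as np

def apply_translation_gains(t_real, s, u, w, g_s=1.0, g_u=1.0, g_w=1.0):
    """Scale a tracked translation component-wise along the HLT axes."""
    basis = np.column_stack([s, u, w])  # columns are s, u, w
    # Coordinates of t_real in the (possibly non-orthogonal) HLT basis.
    coeffs = np.linalg.solve(basis, np.asarray(t_real, dtype=float))
    gains = np.array([g_s, g_u, g_w], dtype=float)
    # Recombine the scaled components into the virtual translation.
    return basis @ (gains * coeffs)
```

With all gains equal to 1 the function returns the tracked translation unchanged, which corresponds to the one-to-one mapping.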

Real-world head rotations can be specified by a vector consisting of three angles, i. e., Rreal ≔ (pitchreal, yawreal, rollreal). The tracked orientation change is applied to the virtual camera. Analogous to Section 3.2, rotation gains are defined for each component (pitch/yaw/roll) of the rotation and are applied to the axes of the locomotion triple. A rotation gain gR is defined by the quotient of the considered component of a virtual world rotation Rvirtual and the real world rotation Rreal, i. e., $g_R := \frac{R_{virtual}}{R_{real}}$. When a rotation gain gR is applied to a real world rotation α the virtual camera is rotated by gR ⋅ α instead of α. This means that if gR = 1 the virtual scene remains stable considering the head's orientation change. In the case gR > 1 the virtual scene appears to move against the direction of the head turn, whereas a gain gR < 1 causes the scene to rotate in the direction of the head turn. A system with no head tracking would result in gR = 0. Rotational gains can be expressed by gR[s], gR[u], gR[w], where the gain gR[s] specified for pitch is applied to s, the gain gR[u] specified for yaw is applied to u, and gR[w] specified for roll is applied to w. In our experiments we have focussed on rotation gains for yaw rotation gR[u].
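A per-frame update for the yaw gain gR[u] could look as follows. This is a sketch under assumptions: angles are in radians, only yaw is handled, and the helper names are invented for illustration. The wrap-around handling is an implementation detail added here so that a step across the 0°/360° boundary is treated as a small rotation.

```python
import math

def wrap_angle(delta):
    """Wrap an angle difference in radians to the interval [-pi, pi)."""
    return (delta + math.pi) % (2.0 * math.pi) - math.pi

def update_virtual_yaw(virtual_yaw, prev_real_yaw, cur_real_yaw, g_r_u=1.0):
    """Advance the virtual camera yaw by the gain-scaled real yaw change."""
    delta_real = wrap_angle(cur_real_yaw - prev_real_yaw)
    return virtual_yaw + g_r_u * delta_real
```

With g_r_u = 1 the virtual camera follows the head exactly; with g_r_u = 0 it ignores head rotation, as in the case of a system without head tracking.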

Instead of multiplying gains with translations or rotations, offsets can be added to real world movements. Such camera manipulations are used if only one kind of motion is tracked, for example, if the user turns the head but stands still, or if the user moves straight ahead without head rotations. If the injected manipulations are reasonably small, the user will unknowingly compensate for these offsets and, as a result, walk along a curve.

The curvature gain gC denotes the resulting bend of a real path. For example, when the user moves straight ahead, a curvature gain that causes reasonably small iterative camera rotations to one side forces the user to walk along a curve in the opposite direction in order to stay on a straight path in the virtual world. The curve is determined by a segment of a circle with radius r, and $g_C := \frac{1}{r}$. In case no curvature is applied it is r = ∞ ⇒ gC = 0, whereas if the curvature causes the user to rotate by 90° clockwise after $\frac{\pi}{2}$ m, the user has covered a quarter circle with radius r = 1 ⇒ gC = 1.
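The two boundary cases given in the text (r = ∞ ⇒ gC = 0 and r = 1 ⇒ gC = 1) are consistent with a curvature gain defined as the reciprocal of the radius. Under that assumption, the rotation accumulated while walking is simply the gain times the arc length, as the following sketch shows. The function names are hypothetical, and distances are assumed to be in meters.

```python
import math

def curvature_gain(radius):
    """g_C = 1 / r; an infinite radius (straight path) gives g_C = 0."""
    return 0.0 if math.isinf(radius) else 1.0 / radius

def injected_rotation(distance_walked, g_c):
    """Rotation in radians accumulated after walking the given arc length."""
    return g_c * distance_walked
```

For example, `math.degrees(injected_rotation(math.pi / 2, curvature_gain(1.0)))` evaluates to 90 degrees (up to floating-point rounding), which is the quarter circle of radius 1 described above.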

Displacement gains specify the mapping from physical head or body rotations to virtual translations. In some cases it is useful to have a means to insert virtual translations while the user turns the head or body but is stationary at the same physical position. Hence, with displacement gains translations are injected with respect to rotational user movements, whereas with curvature gains rotations can be injected corresponding to translational user movements (see Section 3.4). Three displacement gains are introduced: (gD[w],gD[s],gD[u]) ∈ ℝ³, where the components define the contribution of physical yaw, pitch, and roll rotations to the virtual translational displacement. For an active physical user rotation of α ≔ (pitchreal, yawreal, rollreal), the virtual position is translated in the direction of the virtual vectors w, s, and u accordingly.

Up to now only gains have been presented that define the mapping of real-world movements to VE motions. However, not all virtual camera rotations and translations have to be triggered by physical user actions. Time-dependent drifts are an example of this type of manipulation. They can be defined just like the other gains described above. The idea behind these gains is to introduce changes to the virtual camera orientation or position over time. The time-dependent rotation gains are rates in degrees/sec and the corresponding translation gains are rates in m/sec. Time-dependent gains are given by gX = (gX[s],gX[u],gX[w]), where X is replaced by T/t for time-dependent translation gains and R/t for time-dependent rotation gains.
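A time-dependent gain can be read as a constant rate that is integrated over the frame time. The sketch below assumes a simple per-frame update with a fixed rate per HLT component; the function name and the tuple layout (s, u, w) are illustrative choices and do not come from the original system.

```python
def apply_drift(state, gains, dt):
    """Add gain * dt to each component (s, u, w) of a camera state.

    state: current angles in degrees or offsets in meters, per HLT axis
    gains: rates in degrees/sec or m/sec, per HLT axis
    dt:    elapsed time in seconds since the last update
    """
    return tuple(x + g * dt for x, g in zip(state, gains))
```

For instance, a yaw drift of 2 degrees/sec applied for half a second rotates the camera by one degree about u, regardless of what the user does in that time.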

Figure 2. Combining redirection techniques and dynamic passive haptics. (a) Experimental setup: a user touches a table serving as proxy object for (b) a stone block displayed in the virtual world (user's perspective).

In this section we present three sub-experiments in which we quantify how much humans can unknowingly be redirected. We analyze the application of translation gains gT[w], rotation gains gR[u], and curvature gains gC[w].

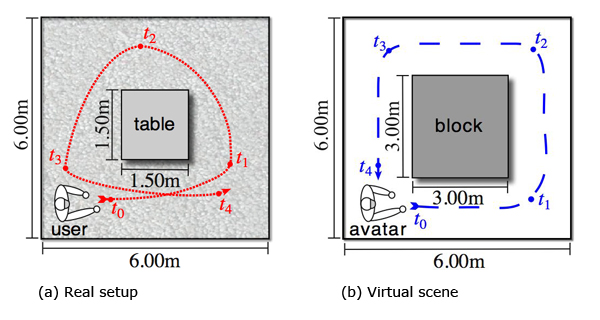

Users were restricted to a 10m x 7m x 2.5m tracking range. The user's path always led her clockwise or counterclockwise around a 3m x 3m square area which is represented as a virtual block in the VE (see Figure 2 (a), 2 (b) and 3). The visual representation of the virtual environment can be changed continuously between different levels of realism (see Figure 2(b) (insets)).

A total of 8 (7 male and 1 female) subjects participated in the study. Three of them had previous experience with walking in VEs using an HMD setup. We arranged them into three groups:

- Three expert users (EU Group), two of whom were authors, who knew about the objectives and the procedure before the study.
- Three aware users (AU Group) who knew that we would manipulate them, but had no knowledge about how the manipulation would be performed.
- Two naive users (NU Group) who had no knowledge about the goals of the experiment and thought they had to report any kind of tracking problems.

The EU and AU groups were asked to report if and how they noticed any discrepancy between actions performed in the real world and the corresponding transfer in the virtual world. The entire experiment took over 1.5 hours (including pre-tests and post-questionnaires) for each subject. Subjects could take breaks at any time.

We used an Intel computer (host) with dual-core processors, 4 GB of main memory and an nVidia GeForce 8800 for system control and logging purposes. The participants were equipped with an HMD backpack consisting of a laptop PC (slave) with a GeForce 7700 Go graphics card and battery power for at least 60 minutes (see Figure 1). The scene (illustrated in Figure 2(a)) was rendered using DirectX and our own software, with which the system consistently maintained a frame rate of 30 frames per second. The VE was displayed on two different head-mounted display (HMD) setups: (1) a ProView SR80 HMD with a resolution of 1240 x 1024 and a large diagonal optical field of view (FoV) of 80°, and (2) an eMagin Z800 HMD having a resolution of 800 x 600 with a smaller diagonal FoV of 45°. During the experiment the room was entirely darkened in order to reduce the user's perception of the real world.

We used the WorldViz Precise Position Tracker, an active optical tracking system which provides sub-millimeter precision and sub-centimeter accuracy. With our setup the position of one active infrared marker can be tracked within an area of approximately 10m x 7m x 2.5m. The update rate of this tracking system is approximately 60 Hz, providing real-time positional data of the active markers. The positions of the markers are sent via Wifi to the laptop. For the evaluation we attached a marker to the HMD, but we also tracked hands and feet of the user. Since the marker on the HMD provides no orientation data, we used an Intersense InertiaCube2 orientation tracker that provides full 360° tracking range along each axis in space and achieves an update rate of 180Hz. The InertiaCube2 is attached to the HMD and connected to the laptop in the backpack of the user.

The laptop on the back of the user is equipped with a wireless LAN adapter. We used two-way communication: data from the InertiaCube2 and the tracking system is sent to the host computer, where the experimenter logs all streams and oversees the experiment. In order to apply generic redirected walking the experimenter can send corresponding control inputs to the laptop. The entire weight of the backpack is about 8 kg, which is quite heavy. However, no wires disturb the immersion, and no assistant must walk beside the user to keep an eye on the wires. Sensing the wires would give the participant a cue to orient physically, an issue we had to avoid in our study. The user and the experimenter communicated via a dual head set system only. Speakers in the head set provided ambient noise such that orientation by means of real-world auditory feedback was not possible for the user.

We performed preliminary interviews and distance/orientation perception tests with the participants to reveal their spatial cognition and body awareness. For instance, the users had to perform simple distance estimation tests. After viewing several distances ranging from 3m to 10m, the subjects had to walk blindfolded until they estimated that the distance seen before had been reached. Furthermore, they had to rotate by specific angles ranging from 45° to 270° and rotate back blindfolded. One objective of the study was to draw conclusions about whether and how body awareness may affect our virtual locomotion approach. We performed the same tests before, during, and after the experiments.

We modulated the mapping between movements in the real and the virtual environment by changing the gains gT[w],gR[u] and gC[w] of the HLT (see Section 3.1).

We evaluated how these parameters can be modified without the user noticing any changes. We altered the sequence and gains for each subject in order to reduce any bias caused by learning effects. After a training period, we used random series starting with different amounts of discrepancy between the real and virtual world. In a simple up-staircase design we slightly increased the difference at random intervals of 5 to 20 seconds until subjects reported a visual-proprioceptive discrepancy; this meant that the perceptual threshold had been reached.
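The up-staircase procedure can be sketched as a simple loop. The code below is an illustrative simulation only: the `detects` callback stands in for the subject's verbal report, and the step size, starting value and upper bound are hypothetical parameters that the text does not specify.

```python
import random

def up_staircase(start, step, detects, maximum, rng=None):
    """Increase the discrepancy stepwise until detects(value) is True.

    Returns the first detected value (or None) and the presentation
    schedule as a list of (hold_seconds, discrepancy) pairs.
    """
    rng = rng or random.Random()
    value = start
    schedule = []
    while value <= maximum:
        hold = rng.uniform(5.0, 20.0)  # random 5 to 20 s interval, as in the text
        schedule.append((hold, value))
        if detects(value):
            return value, schedule  # perceptual threshold reached
        value += step
    return None, schedule  # never detected within the tested range
```

A call such as `up_staircase(0.0, 0.25, lambda d: d >= 0.5, 2.0)` returns 0.5 as the threshold after three presentations.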

Afterwards we performed further tests in order to verify the values derived from the study. All variables were logged and comments were recorded in order to reveal how much discrepancy between the virtual and real world can occur without users noticing. The amount of difference is evaluated on a four-point Likert scale where (0) means no distortion, (1) means a slight, (2) a noticeable and (3) a strong perception of the discrepancy.

Figure 3. Illustration of a user's path during the experiment showing (a) the path through the real setup and (b) the virtual path through the VE, with positions at different points in time t0,...,t4.

The results of the experiment allow us to derive appropriate parameterizations for general redirected walking. Figure 4 shows the applied gains and the corresponding level of perceived discrepancy as described above, pooled for all subjects. The horizontal lines show the threshold that we defined for each subtask in order to limit the rate of detected manipulations.

Figure 4. Evaluation of the generic redirected walking concepts for (a) rotation gains gR[u], (b) translation gains gT[w] and (c) curvature gains gC[w]. Different levels of perceived discrepancy are accumulated. The bars indicate how much users have perceived the manipulated walks. The EU, AU and NU groups are combined in the diagrams. The horizontal lines indicate the detection thresholds as described in Section 4.3 and [ SBJ08 ].

![Figure 4](figure04.jpg)

We tested 147 different rotation gains within-subjects. Figure 4(a) shows the corresponding factors applied to a 90° rotation. The bars show how many turns were perceived as manipulated and how strongly. The degree of perception has been classified into not perceivable, slightly perceivable, noticeable and strongly perceivable. The results show that when we scaled a 90° rotation down to 80°, which corresponds to a gain gR[u] = 0.88, none of the participants noticed the compression. Even with a compression factor gR[u] = 0.77 subjects rarely (11%) recognized the discrepancy between the physical rotations and the corresponding camera rotations. If this factor is applied, users are forced to physically rotate almost 30° more when they perform a 90° virtual rotation.

The subjects adapted to rotation gains very quickly and they perceived them as correctly mapped rotations. We performed a virtual blindfolded turn test. The subjects were asked to turn 135° in the virtual environment, where a rotation gain gR[u] = 0.7 had been applied, i. e., subjects had to turn physically about 190° in order to achieve the required virtual rotation. Afterwards, they were asked to turn back to the initial position. When only a black image was displayed the participants rotated on average 148° back. This is a clear indication that the users sensed the compressed rotations close to a real 135° rotation and hence adapted well to the applied gain.
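The physical turns quoted in the two paragraphs above follow directly from the definition of the rotation gain as the quotient of virtual and real rotation. The helper below is a hypothetical one-liner that makes the arithmetic explicit.

```python
def physical_rotation(virtual_deg, g_r_u):
    """Physical turn in degrees needed for a virtual turn under gain g_R[u]."""
    return virtual_deg / g_r_u

# A 90 degree virtual turn with g_R[u] = 0.77 needs about 116.9 degrees
# physically, i.e. roughly 27 degrees more than without a gain.
extra_for_90 = physical_rotation(90.0, 0.77) - 90.0

# A 135 degree virtual turn with g_R[u] = 0.7 needs about 192.9 degrees,
# in line with the "about 190 degrees" stated in the text.
turn_for_135 = physical_rotation(135.0, 0.7)
```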

We tested 216 distances to which different translation gains were applied (see Figure 4(b)). As mentioned in Section 1, users tend to underestimate distances in VEs. Consequently subjects underestimated the walking speed when a gain below 1.2 was applied. Conversely, when gT[w] > 1.6 subjects recognized the scaled movements immediately. Between these thresholds some subjects overestimated the walking speed whereas others underestimated it. However, most subjects rated their perception of such a gain only as slight or noticeable. In particular, the more users tended to careen (i.e., sway side to side), the less they noticed the application of a translation gain. This may be due to the fact that when they move the head left or right the gain also applies to the corresponding motion parallax. It could also be due to the fact that vestibular stimulation suppresses vection [ LT77 ]. This careening may result in users adapting more easily to the scaled motions. One could exploit this effect by applying translation gains when corresponding tracking events indicate a careening user.

We also performed a virtual blindfolded walk test. The subjects were asked to walk 3m in the VE where translation gains between 0.7 and 1.4 were applied. Afterwards, they were asked to turn, review the walked distance and walk back to the initial position while only a blank screen was displayed. Without any gains applied to the motion, users walked back 2.7m on average. For each translation gain the participants walked too short a distance. This is a well-known effect that stems from the described underestimation of distances but also from safety concerns: after each step participants are less oriented and thus tend to take shorter steps so that they do not collide with any objects. However, on average they walked 2.2m for a translation gain gT[w] = 1.4, 2.5m for gT[w] = 1.3, 2.6m for gT[w] = 1.2, and 2.7m for gT[w] = 0.8 as well as for gT[w] = 0.9. When the gain satisfied 0.8 < gT[w] < 1.2 users walked 2.7m back on average. Thus, it seems participants adapted to the translation gains.

In total we tested 165 distances to which we applied different curvature gains as illustrated in Figure 4(c). When gC[w] satisfies 0 < gC[w] < 0.17 the curvature was not recognized by the subjects. Hence after 3m we were able to redirect subjects up to 15° left or right while they were walking on a segment of a circle with radius of approximately 6m. As long as gC[w] < 0.33, only 12% of the subjects perceived the difference between real and virtual paths. Furthermore, we noticed that the more slowly participants walked, the less they noticed that they were walking on a curve instead of a straight line. When they increased their speed they began to careen and noticed the bending of the walked path. This might be exploited by adjusting the curvature gain with respect to the walking speed. Further studies could examine how the detection of curvature gains depends on walking speed.

One subject noticed every application of a curvature gain. This user also reported a manipulation when no gain was used, but he immediately identified each bending to the right as well as to the left. Therefore, we excluded this subject from the analyses. The spatial cognition pre-tests showed that this user is ambidextrous in terms of hands as well as feet. However, his results for translation and rotation gains are consistent with the findings for the other participants.

In this section we describe implications for the design of a virtual locomotion interface with respect to the results obtained from the user study described in Section 4 . In order to verify these findings we have conducted further tests described in [ SBJ08 ]. For typical VE setups we want to ensure that users perceive a manipulation only in situations where they have to be manipulated strongly, e. g., to avoid a collision.

Based on the results from Section 4 and [ SBJ08 ] we formulate some guidelines in order to allow sufficient redirection. These guidelines shall ensure that, with respect to the experiment, the application of redirected walking is perceived in less than 20% of all walks.

Indeed, perception is a subjective matter, but with these guidelines only a reasonably small number of walks from different users is perceived as manipulated.

Figure 5. Redirection technique: (a) a user in the virtual world approaches a virtual wall such that (b) she is guided to the corresponding proxy prop, i. e., a real wall in the physical space.

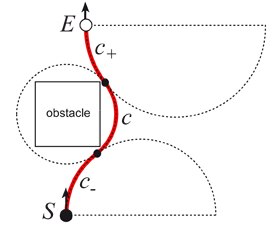

In this section we present how the redirection techniques described in Section 3 can be implemented such that users are guided to particular locations in the physical space, e. g., proxy props, in order to support passive haptic feedback or to avoid a collision. We explain how a virtual path along which a user walks in the IVE is transformed to a path on which the user actually walks in the real world (see Figures 5 and 6).

Before a user can be redirected to a proxy prop supporting passive haptic feedback, the target in the virtual world which is represented by the prop has to be predicted. In most redirection techniques [ NHS04, RKW01, Su07 ] only the walking direction is considered for the prediction procedure.

Our approach also takes into account the viewing direction. The current direction of walk determines the predicted path, and the viewing direction is used for verification: if the projections of the two vectors onto the walking plane differ by more than 45°, no prediction is made. In that case the user is redirected only in order to avoid a collision in the physical space or to keep her from leaving the tracking area. In order to prevent collisions in the physical space only the walking direction has to be considered because the user does not see the physical space due to the HMD.
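The verification step can be sketched as follows. This is a minimal illustration, not the implementation used in our system: the function names are hypothetical, and the walking plane is assumed to be the x-y plane, so projecting a 3D direction simply drops its third component.

```python
import math

def project_to_walking_plane(v):
    """Project a 3D direction onto the walking plane (assumed to be x-y)."""
    return (v[0], v[1])

def angle_between_deg(a, b):
    """Unsigned angle in degrees between two 2D vectors, or None if one is zero."""
    na, nb = math.hypot(*a), math.hypot(*b)
    if na == 0.0 or nb == 0.0:
        return None
    cos_angle = (a[0] * b[0] + a[1] * b[1]) / (na * nb)
    # Clamp to guard against rounding slightly outside [-1, 1].
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_angle))))

def should_predict_target(walk_dir, view_dir, max_angle_deg=45.0):
    """Predict a target only if walking and viewing direction roughly agree."""
    angle = angle_between_deg(project_to_walking_plane(walk_dir),
                              project_to_walking_plane(view_dir))
    return angle is not None and angle < max_angle_deg
```

A user who walks along the x-axis while looking slightly to the side and downwards still yields a prediction, whereas a user looking sideways at 90° does not.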

When the angle between the vectors projected onto the walking plane is sufficiently small (< 45°), the walking direction defines the predicted path. In this case a half-line s+ extending from the current position S in the walking direction (see Figure 6) is tested for intersections with virtual objects in the user's frustum. These objects are defined in terms of their position, orientation and size in a corresponding scene description file (see Section 5). The collision detection is realized by means of ray shooting similar to the approaches described by Pellegrini [ Pel97 ]. For simplicity we consider only the first object hit by the walking direction w. We approximate each virtual object that provides passive feedback by a 2D bounding box. Since these boxes are stored in a quadtree-like data structure the intersection test can be performed in real-time (see Section 5).

As illustrated in Figure 5(a) if an intersection is detected, we store the target object, the intersection angle αvirtual , the distance to the intersection point dvirtual , and the relative position of the intersection point Pvirtual on the edge of the bounding box. From these values we can calculate all data required for the path transformation process as described in the following section.
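A simplified sketch of this intersection test is given below. It assumes that each bounding box is given as a list of 2D corner points and that the walking direction is a unit vector. For brevity it scans all edges linearly instead of using a quadtree-like structure, and all names are hypothetical.

```python
import math

def ray_segment_hit(origin, direction, a, b):
    """Intersect a ray with the edge a-b.

    Returns (distance along the ray, relative position u on the edge in [0, 1]),
    or None if the ray misses the edge or runs parallel to it.
    """
    ex, ey = b[0] - a[0], b[1] - a[1]
    denom = direction[0] * ey - direction[1] * ex  # cross(direction, edge)
    if abs(denom) < 1e-12:
        return None
    wx, wy = a[0] - origin[0], a[1] - origin[1]
    t = (wx * ey - wy * ex) / denom
    u = (wx * direction[1] - wy * direction[0]) / denom
    if t < 0.0 or u < 0.0 or u > 1.0:
        return None
    return t, u

def first_hit(origin, direction, boxes):
    """Return the data stored for the first box edge hit by the walking ray."""
    best = None
    for name, corners in boxes.items():
        for i in range(len(corners)):
            a, b = corners[i], corners[(i + 1) % len(corners)]
            hit = ray_segment_hit(origin, direction, a, b)
            if hit is None or (best is not None and hit[0] >= best["distance"]):
                continue
            ex, ey = b[0] - a[0], b[1] - a[1]
            cos_a = abs(direction[0] * ex + direction[1] * ey) / math.hypot(ex, ey)
            best = {
                "target": name,
                "distance": hit[0],           # d_virtual
                "relative_position": hit[1],  # P_virtual along the edge
                "angle_deg": math.degrees(math.acos(min(1.0, cos_a))),  # alpha_virtual
            }
    return best
```

The returned dictionary corresponds to the stored values: the target object, the intersection angle, the distance to the intersection point, and the relative position of that point on the edge.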

In robotics, techniques for autonomous robots have been developed to compute a path through several interpolation points [ NHS04, GNRH05 ]. However, these techniques are optimized for static environments. Highly-dynamic scenes, where an update of the transformed path occurs 30 times per second, are not considered [ Su07 ]. Since the scene-based description file contains the initial orientation between virtual objects and proxy props, it is possible to redirect a user to the desired proxy prop such that the haptic feedback is consistent with her visual perception. Fast computer-memory access and simple calculations enable consistent passive feedback.

As mentioned above, we predict the intersection angle αvirtual , the distance to the intersection point dvirtual , and the relative position of the intersection point Pvirtual on the edge of the bounding box of the virtual object. These values define the target pose E, i. e., position and orientation in the physical world with respect to the associated proxy prop (see Figure 6). The main goal of redirected walking is to guide the user along a real world path (from S to E) which varies as little as possible from the visually perceived path, i. e., ideally a straight line in the physical world from the current position to the predicted target location. The real world path is determined by the parameters αreal , dreal and Preal . These parameters are calculated from the corresponding parameters αvirtual , dvirtual and Pvirtual in such a way that consistent haptic feedback is ensured.

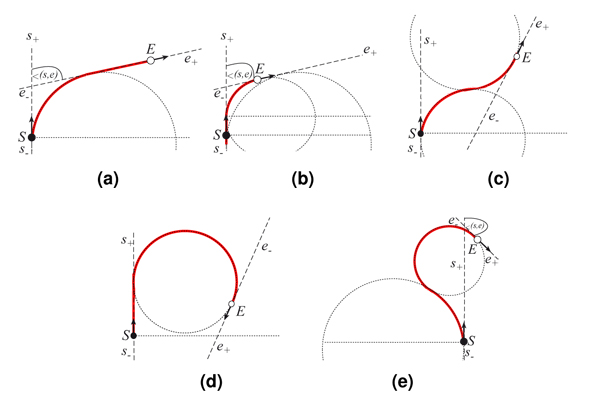

Because many tracking events occur per second, the start and end points change during a walk, but our approach still guarantees smooth paths. We ensure a smooth path by constraining the path parameters such that the path is C1 continuous, starting at the start pose S, and ending at the end pose E. A C1 continuous composition of line segments and circular arcs is determined from the corresponding path parameters for the physical path, i. e. αreal , dreal and Preal (see Figure 5(b)). The trajectories in the real world can be computed as illustrated in Figure 6, considering the start pose S together with the line s through S parallel to the direction of walk, and the end pose E together with the line e through E parallel to the direction of walk. With s+ respectively e+ we denote the half-line of s respectively e extending from S respectively E in the direction of walk, and with s- respectively e- the other half-line of s respectively e.

In Figure 6 different situations are illustrated that may occur for the orientation between S and E. For instance, if s+ intersects e- and the intersection angle satisfies 0 < ∠(s,e) < π/2 as depicted in Figure 6(a) and (b), the path on which we guide the user from S to E is composed of a line segment and a circular arc. The center of the circle is located on the line through S and orthogonal to s, its radius is chosen in such a way that e is tangent to the circle. Depending on whether e+ or e- touches the circle, the user is guided on a line segment first and then on a circular arc or vice versa. If s+ does not intersect e- two different cases are considered: e- intersects s- or not. If an intersection occurs the path is composed of two circular arcs that are constrained to have tangents s and e and to intersect in one point as illustrated in Figure 6(c). If no intersection occurs (see Figure 6(d)) the path is composed of a line segment and a circular arc similar to Figure 6(a). However, if the radius of one of the circles gets too small, i. e., the curvature gets too large, an additional circular arc is inserted into the path as illustrated in Figure 6(e). All other cases can be derived by symmetrical arrangements or by compositions of the described cases.
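For the variant in which the user walks on a circular arc first and on a line segment afterwards, the tangency constraint can be sketched as follows. The sketch assumes unit direction vectors; the function name and the side parameter (+1 for a left turn, -1 for a right turn) are hypothetical. The center C = S + r·n lies on the line through S orthogonal to s, and r is chosen such that the distance from C to e equals r.

```python
import math

def perp(v):
    """Rotate a 2D vector by +90 degrees."""
    return (-v[1], v[0])

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1]

def arc_then_line(S, s_dir, E, e_dir, side):
    """Arc starting at S with heading s_dir, touching line e, then a segment to E.

    Returns center, radius, touch point, arc length and line length, or None
    if no such path exists for the given turning side.
    """
    n = tuple(side * c for c in perp(s_dir))  # unit vector from S towards the center
    m = perp(e_dir)                           # unit normal of line e
    offset = dot((S[0] - E[0], S[1] - E[1]), m)
    for sign in (1.0, -1.0):
        # Tangency: signed distance of C = S + r*n to line e equals sign * r.
        denom = sign - dot(n, m)
        if abs(denom) < 1e-9:
            continue
        r = offset / denom
        if r <= 1e-9:
            continue
        C = (S[0] + r * n[0], S[1] + r * n[1])
        k = dot((C[0] - E[0], C[1] - E[1]), m)
        T = (C[0] - k * m[0], C[1] - k * m[1])  # point where the circle touches e
        radial = (T[0] - C[0], T[1] - C[1])
        heading = tuple(side * c / r for c in perp(radial))
        if dot(heading, e_dir) < 1.0 - 1e-6:
            continue  # heading at T would not match e: not C1 continuous
        line_len = dot((E[0] - T[0], E[1] - T[1]), e_dir)
        if line_len < -1e-9:
            continue  # E lies behind the touch point
        a0 = math.atan2(S[1] - C[1], S[0] - C[0])
        a1 = math.atan2(T[1] - C[1], T[0] - C[0])
        sweep = (side * (a1 - a0)) % (2.0 * math.pi)
        return {"center": C, "radius": r, "touch_point": T,
                "arc_length": r * sweep, "line_length": line_len}
    return None
```

For S = (0, 0) with heading (1, 0) and E = (2, 5) with heading (0, 1), a left turn yields a quarter circle of radius 2 around (0, 2) followed by a line segment of length 3. The remaining cases of Figure 6 are not covered by this sketch.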

Figure 5 shows how a path is transformed using the described approaches in order to guide the user to the predicted target proxy prop, i. e., a physical wall. In Figure 5(a) an IVE is illustrated. Assuming that the angle between the projections of the viewing direction and direction of walking onto the walking plane is sufficiently small (see Section 5.4), the desired target location in the IVE is determined as described in Section 5.4. The target location is denoted by point Pvirtual at the bottom wall. Moreover, the intersection angle αvirtual as well as the distance dvirtual to Pvirtual are calculated. The registration of each virtual object to a physical proxy prop allows the system to determine the corresponding values Preal , αreal and dreal , and thus to derive the start and end poses S and E. A corresponding path as illustrated in Figure 5 is composed like the paths shown in Figure 6 .

When guiding a user through the real world, collisions with the physical setup have to be prevented. Collisions in the real world are predicted, similarly to those in the virtual world, based on the intersection of the direction of walk and real objects. A ray is cast in the direction of walk and tested for intersection with real world objects represented in the scene description file (see Section 5.6 ). If such a collision is predicted a reasonable bypass around an obstacle is determined as illustrated in Figure 7. The previous path between S and E is replaced by a chain of three circular arcs: a segment c of a circle which encloses the entire bounding box of the obstacle, and two additional circular arcs c+ and c- . The circles corresponding to these two segments are constrained to touch the circle around the obstacle. Hence, both circles may have different radii (as illustrated in Figure 7). Circular arc c is bounded by the two touching points, c- is bounded by one of the touching points and S and c+ by the other touching point and E.
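The prediction part of this procedure can be sketched as follows. The sketch only computes a circle enclosing an obstacle's bounding box and tests the walking ray against it; the construction of the arcs c+ and c- is omitted. The function names are hypothetical, and taking the centroid of the corners as circle center is an assumption.

```python
import math

def enclosing_circle(corners):
    """Circle around the centroid of the corners that contains the whole box."""
    cx = sum(p[0] for p in corners) / len(corners)
    cy = sum(p[1] for p in corners) / len(corners)
    radius = max(math.hypot(p[0] - cx, p[1] - cy) for p in corners)
    return (cx, cy), radius

def ray_hits_circle(origin, direction, center, radius):
    """Distance along a unit-direction ray to the circle, or None if it is missed."""
    fx, fy = center[0] - origin[0], center[1] - origin[1]
    along = fx * direction[0] + fy * direction[1]
    perp_sq = fx * fx + fy * fy - along * along  # squared distance from center to ray line
    if perp_sq > radius * radius:
        return None
    half_chord = math.sqrt(radius * radius - perp_sq)
    t = along - half_chord
    if t < 0.0:
        t = along + half_chord  # the ray starts inside the circle
    return t if t >= 0.0 else None
```

If the test returns a distance, the direct path would pass through the safety circle of the obstacle and a bypass has to be computed.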

In the previous sections we have described how a real-world path can be generated such that a user is guided to a registered proxy prop and unintended collisions in the real world are avoided. Actually, it is possible to represent a virtual path by many different physical paths. In order to select the best transformed path we define a score function for each considered path. The score function expresses the quality of paths in terms of matching visual and vestibular/proprioceptive cues or differences/discrepancies of the real and virtual worlds.

with the length of the virtual path dvirtual > 0 and the length of the transformed real path dreal > 0. Case differentiation is done in order to weight up- and downscaling equivalently. Furthermore we define the terms

where maxCurvature denotes the maximal and avgCurvature denotes the average curvature of the entire physical path. The constants c1 , c2 and c3 can be used to weight the terms in order to adjust them to the user's sensitivity. For example, if a user is susceptible to curvatures, c1 and c2 can be increased in order to give the corresponding terms more weight. In our setup we use c1 = c2 = 0.4 and c3 = 0.2, reflecting that users are more sensitive to curvature gains than to scaling of distances.

We specify the score function as

(1)

This function satisfies 0 ≤ score ≤ 1 for all paths. If score = 1 for a transformed path, the predicted virtual path and the transformed path are equal. With increasing differences between virtual and transformed path, the score function decreases and approaches zero. In our experiments most paths generated as described above achieve scores between 0.4 and 0.9 with an average score of 0.74. Rotation gains are not considered in the score function since when the user turns the head no path needs to be transformed in order to guide a user to a proxy prop.
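As an illustration, the following sketch implements one possible score that is consistent with the properties stated above: it equals 1 for identical paths, decreases towards zero with growing differences, and treats up- and downscaling equally. The exact form of the three terms and of their combination is an assumption made for this example; only the weights c1 = c2 = 0.4 and c3 = 0.2 are taken from the text.

```python
def scale_ratio(d_virtual, d_real):
    """Ratio >= 1 of the two path lengths, identical for up- and downscaling."""
    if d_virtual <= 0.0 or d_real <= 0.0:
        raise ValueError("path lengths must be positive")
    return d_virtual / d_real if d_virtual > d_real else d_real / d_virtual

def score(d_virtual, d_real, max_curvature, avg_curvature,
          c1=0.4, c2=0.4, c3=0.2):
    """Assumed score in (0, 1]; 1 means virtual and transformed path are equal."""
    t1 = 1.0 + c1 * max_curvature                          # penalty for maximal curvature
    t2 = 1.0 + c2 * avg_curvature                          # penalty for average curvature
    t3 = 1.0 + c3 * (scale_ratio(d_virtual, d_real) - 1.0)  # penalty for distance scaling
    return 1.0 / (t1 * t2 * t3)
```

With such a function, candidate physical paths for the same virtual path can be ranked and the path with the highest score selected.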

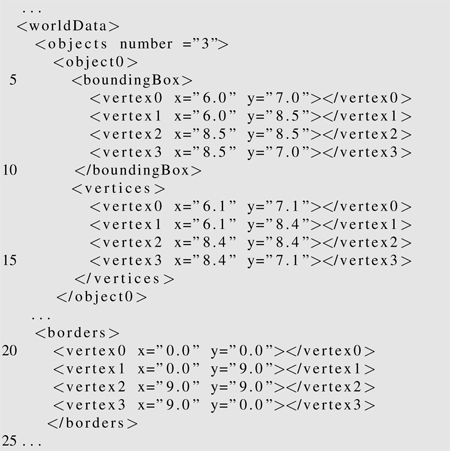

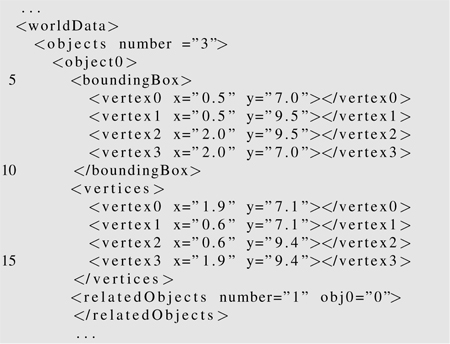

In order to register proxy props with virtual objects we represent the virtual and the physical world by means of an XML-based description in which all objects are discretized by a polyhedral representation, e. g., 2D bounding boxes. We use a two-dimensional representation, i. e., X and Y components only, in order to decrease computational effort. Furthermore, in most cases a 2D representation is sufficient, e. g., to avoid collision with physical obstacles. The degree of approximation is defined by the level of discretization set by the developer. Each real and virtual object is represented by line segments representing the edges of their bounding boxes. As mentioned in Section 2 the position, orientation and size of a proxy prop need not match these characteristics exactly. For most scenarios a certain deviation is not noticeable by the user when she touches proxy props, and both worlds are perceived as congruent. If tracked proxy props or registered virtual objects are moved within the working space or the virtual world, respectively, changes of their poses are updated in our XML-based description. Thus, dynamic scenarios where the virtual and the physical environment may change are considered in our approach.

Listing 1 shows part of an XML-based description of a working space. In lines 5-10 the bounding box of a real world object is defined. In lines 11-15 the vertices of the object are defined in real world coordinates. The bounding box provides an additional security area around the real world object such that collisions are avoided. The borders of the entire tracking space are defined by means of a rectangular area in lines 19-24.

Listing 2 shows part of an XML-based description specifying a virtual world. In lines 5-10 the bounding box of a virtual world object is defined. The registration between this object and proxy props is defined in line 17. The field relatedObjects specifies the number of proxy props as well as the objects which serve as proxy props.
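To illustrate how such a description might be processed, the following sketch parses a small XML fragment into edge lists and proxy prop registrations. The fragment is not taken from our description files: apart from the field name relatedObjects, all element and attribute names as well as the object identifiers are hypothetical.

```python
import xml.etree.ElementTree as ET

# Hypothetical fragment; the schema is an assumption made for this example.
SCENE_XML = """
<world name="virtual">
  <object id="virtualTable">
    <boundingBox>
      <vertex x="1.0" y="1.0"/>
      <vertex x="2.0" y="1.0"/>
      <vertex x="2.0" y="1.5"/>
      <vertex x="1.0" y="1.5"/>
    </boundingBox>
    <relatedObjects number="1">
      <object id="realTable"/>
    </relatedObjects>
  </object>
</world>
"""

def load_objects(xml_text):
    """Map each object id to its bounding box edges and registered proxy props."""
    root = ET.fromstring(xml_text)
    objects = {}
    for obj in root.findall("object"):
        corners = [(float(v.get("x")), float(v.get("y")))
                   for v in obj.findall("boundingBox/vertex")]
        # Each object is represented by the line segments of its bounding box.
        edges = [(corners[i], corners[(i + 1) % len(corners)])
                 for i in range(len(corners))]
        props = [p.get("id") for p in obj.findall("relatedObjects/object")]
        objects[obj.get("id")] = {"edges": edges, "proxy_props": props}
    return objects
```

Calling load_objects(SCENE_XML) yields four edges for the object virtualTable together with the identifier of its registered proxy prop.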

In this paper, we analyzed the users' sensitivity to redirected walking manipulations in several experiments. We introduced a taxonomy of redirection techniques and tested the perceptibility of the corresponding gains in a practically useful range. The results of the conducted experiments show that users can be turned physically about 41% more or 10% less than the perceived virtual rotation without noticing the difference.

Our results agree with previous findings [ JPSW08 ] that users are more sensitive to scene motion if the scene moves against head rotation than if the scene moves with head rotation. Walked distances can be scaled up or down by 22%. When applying curvature gains users can be redirected such that they unknowingly walk on an arc of a circle when the radius is greater than or equal to 15m. Certainly, sensitivity to redirected walking is a subjective matter, but the results have potential to serve as guidelines for the development of future locomotion interfaces.

We have performed further questionnaires in order to determine the users' fear of colliding with physical objects. The subjects rated their level of fear on a five-point Likert scale (0 corresponds to no fear, 4 corresponds to a high level of fear). The average rating was approximately 0.6, which shows that the subjects felt safe even though they were wearing an HMD and knew that they were being manipulated. Further post-questionnaires based on a comparable Likert scale show that the subjects had only marginal positional and orientational indications due to environmental audio (0.6), visible (0.1) or haptic (1.6) cues. We measured simulator sickness by means of Kennedy's Simulator Sickness Questionnaire (SSQ). The Pre-SSQ score averaged 16.3 over all subjects and the Post-SSQ score 36.0. For subjects with high Post-SSQ scores, we conducted a follow-up test on another day to identify whether the sickness was caused by the applied redirected walking manipulations. In this test the subjects were allowed to walk in the same IVE for a longer period of time while no manipulations were applied. Each subject who was susceptible to cybersickness in the main experiment showed the same symptoms again after approximately 15 minutes. Although cybersickness is an important concern, the follow-up tests suggest that redirected walking is not a large contributing factor to cybersickness.

In the future we will consider other redirection techniques presented in the taxonomy of Section 3, which have not been analyzed in the scope of this paper. Moreover, further conditions have to be taken into account and tested for their influence on redirected walking, for example, distant scenes, level of detail, contrast, etc. Informal tests suggest that manipulations can be intensified in some cases, e. g., when fewer objects that could provide further motion cues while the user walks are close to the camera.

Currently, the tested setup consists of a cuboid-shaped tracked working space (10m x 7m x 2.5m) and a real table serving as proxy prop for virtual blocks, tables etc. With an increasing number of virtual objects and proxy props more rigorous redirection concepts have to be applied, and users tend to recognize the inconsistencies more often. However, first experiments in this setup show that it becomes possible to explore arbitrary IVEs by real walking, while consistent passive haptic feedback is provided. Users can navigate within arbitrarily sized IVEs while remaining in a comparably small physical space, where virtual objects can be touched. Indeed, unpredicted changes of the user's motion may result in strongly curved paths, and the user will recognize this. Moreover, significant inconsistencies between vision and proprioception may cause cybersickness [ BKH97 ].

We believe that redirected walking combined with passive haptic feedback is a promising solution to make exploration of IVEs more ubiquitously available. For example, Google Earth or multi-player online games might be more usable if they were integrated into IVEs with real walking capabilities. One drawback of our approach is that proxy props have to be associated manually with their virtual counterparts. This information could be derived from the virtual scene description automatically. When the HMD is equipped with a camera, computer vision techniques could be applied in order to extract information about the IVE and the real world automatically. Furthermore, we would like to evaluate how much the visual representation and the passive haptic feedback of proxy props may differ without users noticing.

[Ber00] The Brain's Sense of Movement, Harvard University Press, Cambridge, Massachusetts, 2000, isbn 0-674-80109-1.

[BIL00] Perception of two-dimensional, simulated ego-motion trajectories from optic flow, Vis. Res., 2000, no. 21, 2951–2971, issn 0042-6989.

[BKH97] Travel in Immersive Virtual Environments: An Evaluation of Viewpoint Motion Control Techniques, Proceedings of the 1997 Virtual Reality Annual International Symposium (VRAIS'97), pp. 45–52, IEEE Press, 1997, isbn 0-8186-7843-7.

[BRP05] The Hand is Slower than the Eye: A Quantitative Exploration of Visual Dominance over Proprioception, Proceedings of IEEE Virtual Reality Conference, pp. 3–10, IEEE, 2005, isbn 0-7803-8929-8.

[BS02] Virtual Locomotion System for Large-Scale Virtual Environment, Proceedings of IEEE Virtual Reality Conference, pp. 291–292, IEEE, 2002, isbn 0-7695-1492-8.

[BSD05] The perception of walking speed in a virtual environment, Presence, (2005), no. 4, 394–406, issn 1054-7460.

[BSH02] A New Step-in-Place Locomotion Interface for Virtual Environment with Large Display System, ACM SIGGRAPH 2002 conference abstracts and applications, p. 63, ACM Press, 2002, isbn 1-58113-525-4.

[BvdHV94] A theory of visual stability across saccadic eye movements, Behav. Brain Sci., (1994), no. 2, 247–292, issn 0140-525X.

[CD05] Haptic sensing technologies for a novel design methodology in micro/nanotechnology, Journal of Nanotechnology Perceptions, (2005), 89–98, issn 1660-6795.

[DB78] Visual vestibular interaction: Effects on self-motion perception and postural control, Perception. Handbook of Sensory Physiology, pp. 755–804, R. Held, H. W. Leibowitz and H. L. Teuber (Eds.), Springer, Berlin, Heidelberg, New York, 1978, isbn 3-540-08300-6.

[FLKB07] Estimation of travel distance from visual motion in virtual environments, ACM Transactions on Applied Perception, 4 (2007), 419–428, issn 1544-3558.

[FWW08] LLCM-WIP: Low-Latency, Continuous-Motion Walking-in-Place, Proceedings of IEEE Symposium on 3D User Interfaces 2008, pp. 97–104, IEEE Press, 2008, isbn 978-1-4244-2047-6.

[GNRH05] Telepresence Techniques for Controlling Avatar Motion in First Person Games, Intelligent Technologies for Interactive Entertainment (INTETAIN 2005), pp. 44–53, 2005, isbn 978-3-540-30509-5.

[IAR06] Distance Perception in Immersive Virtual Environments, Revisited, Proceedings of IEEE Conference on Virtual Reality, pp. 3–10, IEEE Press, 2006, issn 1087-8270.

[IHT06] Powered Shoes, SIGGRAPH 2006 International Conference on Computer Graphics and Interactive Techniques, Emerging Technologies, no. 28, ACM, 2006, isbn 1-59593-364-6.

[IKP07] Elucidating the Factors that can Facilitate Veridical Spatial Perception in Immersive Virtual Environments, Proceedings of the IEEE Virtual Reality Conference, pp. 11–18, IEEE, 2007, isbn 1-4244-0906-3.

[IMWB01] Passive Haptics Significantly Enhances Virtual Environments, Proceedings of 4th Annual Presence Workshop, 2001.

[Ins01] Passive Haptics Significantly Enhances Virtual Environments, Department of Computer Science, University of North Carolina at Chapel Hill, TR01-010, 2001.

[IRA07] Seven League Boots: A New Metaphor for Augmented Locomotion through Moderately Large Scale Immersive Virtual Environments, Proceedings of IEEE Symposium on 3D User Interfaces, pp. 167–170, IEEE Press, 2007, isbn 1-4244-0907-1.

[IYFN05] CirculaFloor, IEEE Computer Graphics and Applications, (2005), no. 1, 64–67, issn 0272-1716.

[JPSW08] Sensitivity to Scene Motion for Phases of Head Yaws, Proceedings of 5th ACM Symposium on Applied Perception in Graphics and Visualization, pp. 155–162, ACM Press, 2008, isbn 978-1-59593-981-4.

[KBMF05] Combining Passive Haptics with Redirected Walking, Proceedings of Conference on Augmented Tele-Existence, International Conference Proceeding Series, pp. 253–254, ACM Press, 2005, isbn 0-473-10657-4.

[LBvdB99] Perception of self-motion from visual flow, Trends. Cogn. Sci., (1999), no. 9, 329–336, issn 1364-6613.

[Lin99] Bimanual Interaction, Passive-Haptic Feedback, 3D Widget Representation, and Simulated Surface Constraints for Interaction in Immersive Virtual Environments, The George Washington University, Department of EE & CS, 1999.

[LK03] Visual perception of egocentric distance in real and virtual environments, Virtual and adaptive environments, L. J. Hettinger and M. W. Haas (Eds.), Lawrence Erlbaum Associates, Mahwah, NJ, 2003, isbn 0-8058-3107-X.

[LT77] Visual-Vestibular Interaction: Vestibular Stimulation Suppresses the Visual Induction of Illusory Self-rotation, Aviation, Space and Environmental Medicine, (1977), 248–253.

[NHS04] Motion Compression for Telepresent Walking in Large Target Environments, Presence, (2004), no. 1, 44–60, issn 1054-7460.

[Pel97] Ray Shooting and Lines in Space, Handbook of discrete and computational geometry, Chapman & Hall, pp. 599–614, 1997, isbn 0-8493-8524-5.

[PWF08] Evaluation of Reorientation Techniques for Walking in Large Virtual Environments, Proceedings of the IEEE Virtual Reality Conference, pp. 121–127, IEEE Press, 2008, isbn 978-1-4244-1971-5.

[Raz05] Redirected Walking, University of North Carolina, Chapel Hill, 2005.

[RKW01] Redirected Walking, Proceedings of Eurographics, pp. 289–294, ACM Press, 2001, isbn 1-4244-0906-3.

[RW07] Can People not Tell Left from Right in VR? Point-to-Origin Studies Revealed Qualitative Errors in Visual Path Integration, Proceedings of Virtual Reality, pp. 3–10, IEEE, 2007.

[SBJ08] Analyses of Human Sensitivity to Redirected Walking, 15th ACM Symposium on Virtual Reality Software and Technology, pp. 149–156, 2008.

[SBRK08] Moving Towards Generally Applicable Redirected Walking, Proceedings of the Virtual Reality International Conference (VRIC), pp. 15–24, 2008.

[STU07] Cyberwalk: Implementation of a Ball Bearing Platform for Humans, Proceedings of HCI, Lecture Notes in Computer Science, pp. 926–935, 2007, isbn 978-3-540-73106-1.

[Su07] Motion Compression for Telepresence Locomotion, Presence: Teleoperators and Virtual Environments, (2007), no. 16, 385–398, issn 1054-7460.

[UAW99] Walking > Walking-in-Place > Flying, in Virtual Environments, Proceedings of 26th annual Conference on Computer Graphics and Interactive Techniques (SIGGRAPH), pp. 359–364, ACM, 1999, isbn 0-201-48560-5.

[Wal87] Perceiving a Stable Environment When One Moves, Annual Review of Psychology, (1987), 1–27, issn 0066-4308.

[WCF05] Comparing VE Locomotion Interfaces, Proceedings of IEEE Virtual Reality Conference, pp. 123–130, IEEE Press, 2005, isbn 0-7803-8929-8.

[Wer94] Motion Perception during Self-Motion, The direct versus inferential controversy revisited, Behav. Brain Sci., (1994), no. 2, 293–355, issn 0140-525X.

[WNM06] Updating Orientation in Large Virtual Environments using Scaled Translational Gain, Proceedings of the 3rd ACM Symposium on Applied Perception in Graphics and Visualization, ACM Press, pp. 21–28, 2006, isbn 1-59593-429-4.

License

Any party may pass on this Work by electronic means and make it available for download under the terms and conditions of the Digital Peer Publishing License. The text of the license may be accessed and retrieved at http://www.dipp.nrw.de/lizenzen/dppl/dppl/DPPL_v2_en_06-2004.html.

Recommended citation

Frank Steinicke, Gerd Bruder, Klaus Hinrichs, Jason Jerald, Harald Frenz, and Markus Lappe, Real Walking through Virtual Environments by Redirection Techniques. JVRB - Journal of Virtual Reality and Broadcasting, 6(2009), no. 2. (urn:nbn:de:0009-6-17472)

Please provide the exact URL and date of your last visit when citing this article.