GRAPP 2006

A Survey of Image-based Relighting Techniques

extended and revised for JVRB

urn:nbn:de:0009-6-21208

Abstract

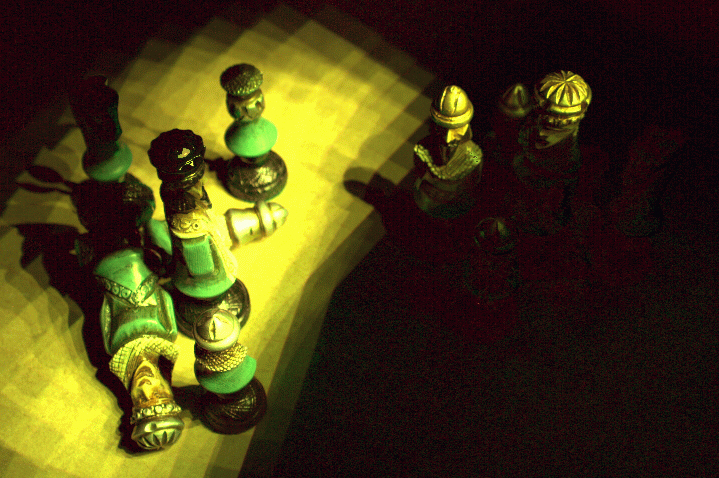

Image-based Relighting (IBRL) has recently attracted a lot of research interest for its ability to relight real objects or scenes under novel illumination captured in natural or synthetic environments. Complex lighting effects such as subsurface scattering, interreflection, shadowing, mesostructural self-occlusion, refraction and other relevant phenomena can be generated using IBRL. The main advantage of image-based graphics is that the rendering time is independent of scene complexity, as the rendering is actually a process of manipulating image pixels instead of simulating light transport. The goal of this paper is to provide a complete and systematic overview of the research in Image-based Relighting. We observe that essentially all IBRL techniques can be broadly classified into three categories (Fig. 9), based on how the scene/illumination information is captured: Reflectance function-based, Basis function-based and Plenoptic function-based. We discuss the characteristics of each of these categories and their representative methods. We also discuss two relevant issues of IBRL: the sampling density and the types of light source(s).

Keywords: Image-based Relighting, Survey, Image-based Techniques, Augmented Reality

Subjects: Computer Graphics

Image-based Modeling and Rendering (IBMR) synthesizes realistic images from pre-recorded images without a complex and long rendering process as in traditional geometry-based computer graphics. The major drawback of IBMR is its inherent rigidity: most IBMR techniques assume a static illumination condition. This assumption cannot fully satisfy the needs of computer graphics, since illumination modification is a key operation in computer graphics. The ability to control the illumination of the modeled scene enhances the three-dimensional illusion, which in turn improves viewers' understanding. If the illumination can be modified by relighting the images, instead of rendering the geometric models, the time for image synthesis will be independent of the scene complexity. This also saves the artist/designer an enormous amount of time in fine-tuning the illumination conditions to achieve realistic atmospheres. Applications range from global illumination and lighting design to augmented and mixed reality, where real and virtual objects are combined with consistent illumination.

Two major motivations for IBRL are:

- It allows the user to vary illuminance of the whole (or only interesting portions of the) scene, improving recognition and satisfaction.

- It brings us a step closer to realizing the use of image-based entities as the basic rendering primitives/entities.

For didactic purposes, we classify image-based relighting techniques into three categories, namely: Reflectance Function-based, Basis Function-based and Plenoptic Function-based. These categories should actually be viewed as a continuum rather than as absolute, discrete classes, since there are techniques that defy these strict categorizations.

Reflectance Function-based Relighting techniques explicitly estimate the reflectance function at each visible point of the object or scene. This is also known as the Anisotropic Reflection Model [ Kaj85 ] or the Bidirectional Surface Scattering Reflectance Distribution Function (BSSRDF) [ JMLH01 ]. It is defined as the ratio of the outgoing to the incoming radiance. Reflectance estimation can be achieved with a calibrated light setup, which provides full control of the incident illumination. A reflectance function is modeled from data of the scene captured under varying illumination and view directions. These techniques then apply novel illumination to the calculated reflectance function to generate novel illumination effects in the scene.

Basis Function-based Relighting techniques take advantage of the linearity of the rendering operator with respect to illumination, for a fixed scene. Rerendering is accomplished via linear combination of a set of pre-rendered "basis" images. These techniques, for the purpose of computing a solution, determine a time-independent basis - a small number of "generative" global solutions that suffice to simulate the set of images under varying illumination and viewpoint.

Plenoptic Function-based Relighting techniques are based on the computational model, the Plenoptic Function [ AB91 ]. The original plenoptic function aggregates all the illumination and the scene changing factors in a single "time" parameter. So most research concentrates on view interpolation and leaves the time parameter untouched (illumination and scene static). The plenoptic function-based relighting techniques extract the illumination component from the aggregate time parameter, and facilitate relighting of scenes.

The remainder of the paper is organized as follows: Section 2, Section 3 and Section 4 respectively discuss each of the three relighting categories, along with their representative methods. In Section 5, we discuss some of the other relevant issues of relighting. We then provide some directions of future research in Section 6. Finally, we conclude with our final remarks in Section 7.

A reflectance function is the measurement of how materials reflect light, or more specifically, how they transform incident illumination into radiant illumination. The Bidirectional Reflectance Distribution Function (BRDF) [ NRH77 ] is a general form of representing surface reflectivity. A better representation is the Bidirectional Surface Scattering Reflectance Distribution Function (BSSRDF) [ JMLH01 ], which models effects such as color bleeding, translucency and diffusion of light across shadow boundaries, otherwise impossible with a BRDF model. As introduced by [ DHT00 ], the reflectance function R, an 8D function, determines the light transfer between light entering a bounding volume at a direction and position $\psi_{\mathrm{incident}}$ and leaving at $\psi_{\mathrm{exitant}}$:

$$R = R(\psi_{\mathrm{incident}}, \psi_{\mathrm{exitant}})$$

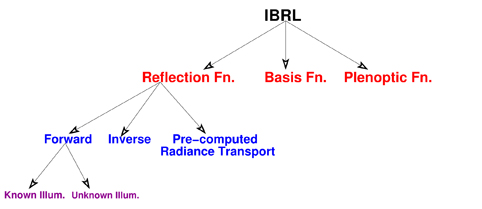

Figure 1. Mirrored balls, representing illumination of an environment, used for relighting faces [ DHT00 ]. Images cited from the website [1] with permission from Mr. Debevec.

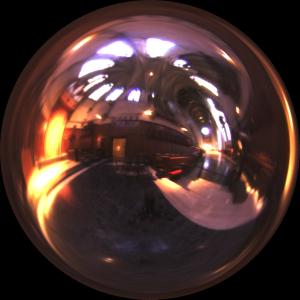

Figure 2. An arrangement of chess pieces, illuminated by different types of incident light fields [ MPDW04 ]. Images cited from the website [2] with permission from Mr. Dutré.

This calculated reflectance function can be used to compute relit images of the objects, lit with novel illumination. The computation for each relit pixel is reduced to multiplying corresponding coefficients of the reflectance function and the incident illumination.

$$L_{\mathrm{exitant}}(\omega) = \int_{\Omega} R \, L_{\mathrm{incident}}(\omega) \, d\omega$$

where Ω is the space of all light directions over a hemisphere centered around the object to be illuminated (ω ∈ Ω). For every viewing direction, each pixel in an image stores its appearance under all illumination directions. Thus each pixel in an image is a sample of the reflectance function. The reflectance functions are sampled from real objects by illuminating the object from a set of directions while recording photographs from different viewpoints.
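The per-pixel computation described above can be sketched as a discrete sum over sampled light directions. This is only an illustration under stated assumptions: the function names `relight_pixel` and `relight_image` are hypothetical, the reflectance function is assumed to be tabulated at a finite set of directions, and the solid-angle weight of each direction is assumed to be folded into the incident illumination samples.

```python
from typing import List, Sequence


def relight_pixel(reflectance: Sequence[float], incident: Sequence[float]) -> float:
    """Discrete stand-in for the integral of R * L_incident over Omega.

    reflectance[k] is the pixel's recorded response to unit light from
    sampled direction k; incident[k] is the novel illumination arriving
    from that direction (solid-angle weight assumed to be folded in).
    """
    if len(reflectance) != len(incident):
        raise ValueError("reflectance and incident must cover the same directions")
    return sum(r * l for r, l in zip(reflectance, incident))


def relight_image(
    reflectance_field: Sequence[Sequence[Sequence[float]]],
    incident: Sequence[float],
) -> List[List[float]]:
    """Relight every pixel; reflectance_field[y][x] is one reflectance function."""
    return [[relight_pixel(rf, incident) for rf in row] for row in reflectance_field]


if __name__ == "__main__":
    # One row, two pixels, three sampled light directions (made-up numbers).
    field = [[[0.5, 0.25, 0.0], [0.0, 0.0, 1.0]]]
    print(relight_image(field, [2.0, 4.0, 10.0]))
```

Because the sum is linear in the incident illumination, changing the lighting only changes the `incident` vector; the tabulated reflectance samples are reused as they are.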

We classify the estimation of reflectance functions into three different categories: Forward, Inverse and Pre-computed radiance transport.

The forward methods of estimating reflectance functions sample these functions exhaustively and tabulate the results. For each incident illumination, they store the reflectance function weights for a fixed observed direction. The forward method of estimating reflectance functions can further be divided into two categories, on the basis of illumination information provided, Known and Unknown.

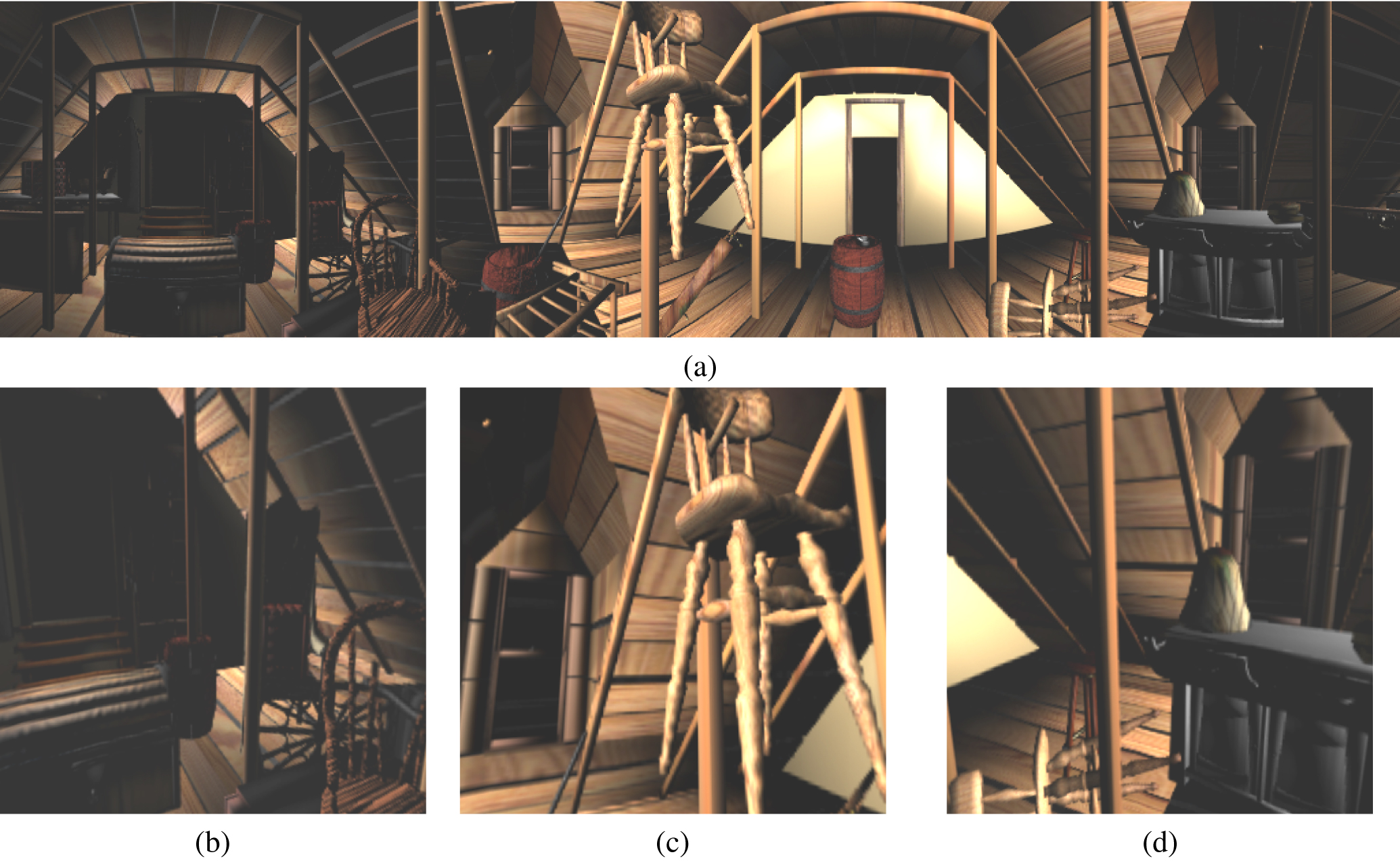

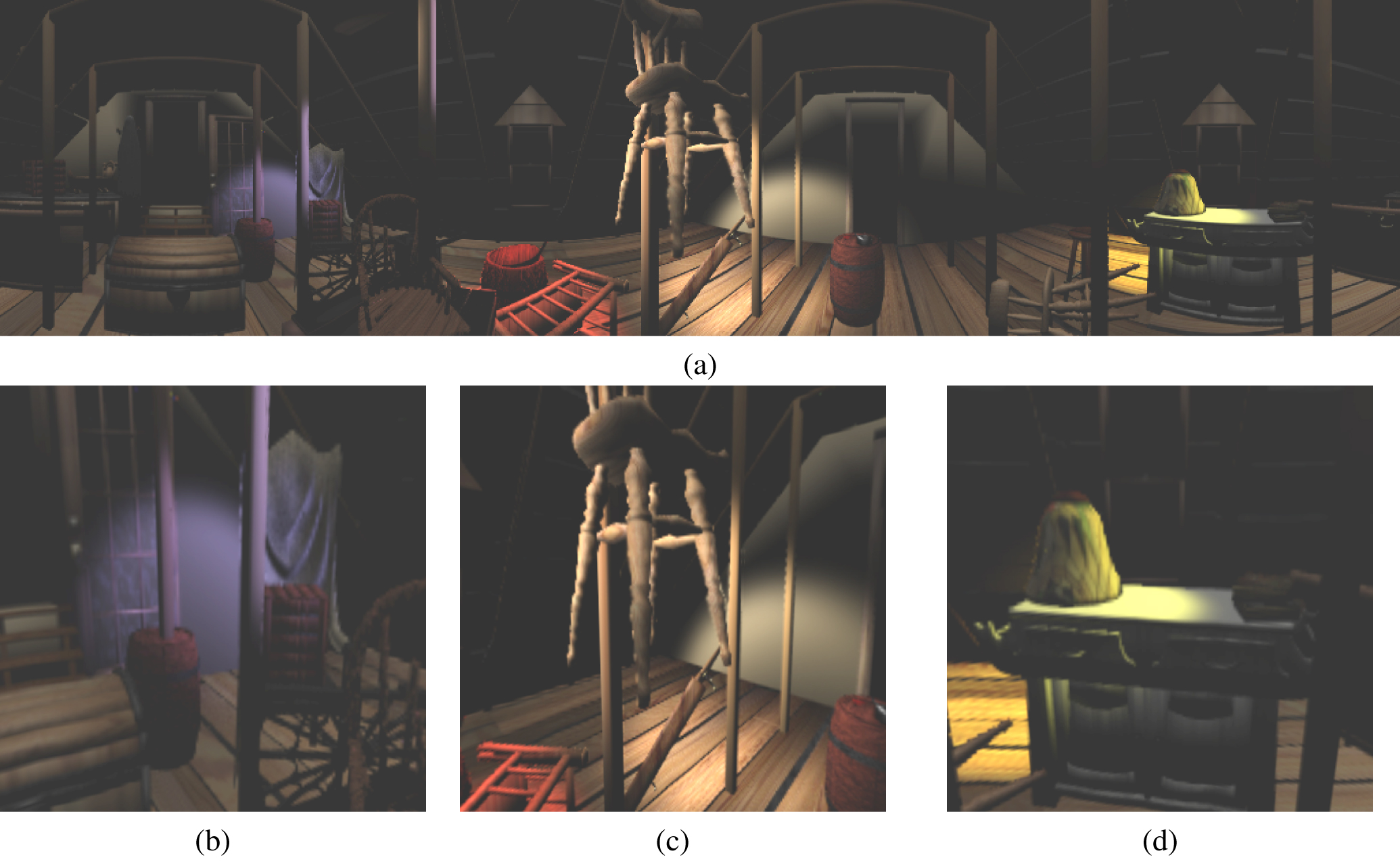

Figure 3. Attic relighting using conventional light sources (top) and 5 spot lights (bottom) [ WHF01 ]. Original image source: Tien-Tsin Wong, Pheng-Ann Heng, and Chi-Wing Fu, Interactive relighting of panoramas, IEEE Comput. Graph. Appl. 21(2001), no. 2, 32-41. ©2001 IEEE

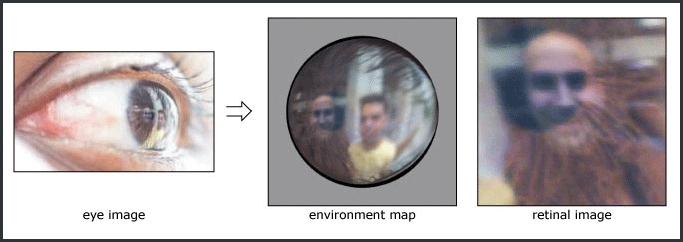

Figure 4. Using the mathematical framework of the imaging system of the eye, one can estimate the illumination information [ NN04 ]. Image cited from the website [3] with permission from Mr. Nayar.

The techniques with known illumination incorporate the information in their setup. The user is provided full control over the direction, position and type of incident illumination. This information is directly used for finding the reflectance properties of the scene. [ DHT00 ] use the highest resolution incident illumination, with roughly 2000 directions, and construct a reflectance function for each observed image pixel from its values over the space of illumination directions (Fig. 1). [ MPDW04 ] sample the reflectance functions from real objects by illuminating the object from a set of directions while recording the photographs. They reconstruct a smooth and continuous reflectance function from the sampled reflectance functions, using the multilevel B-spline technique. [ MPDW04 ] exploit the richness in the angular and spatial variation of the incident illumination, and measure six-dimensional slices of the eight-dimensional reflectance field, for a fixed viewpoint (Fig. 2). On the other hand, [ MGW01 ] store the coefficients of a biquadratic polynomial for each texel, thereby improving upon the compactness of the representation, and use it to reconstruct the surface color under varied illumination conditions. The reflectance functions and illumination are expressed by a set of common basis functions, enabling a significant speed-up in the relighting process. This method maintains all the high-frequency features such as highlights and self-shadowing. [ GLL04 ] capture the effects of translucency by illuminating individual surface points of a translucent object and measuring the impulse response of the object in each case.
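To make the per-texel biquadratic representation concrete, the following sketch evaluates one such polynomial for a given light direction. It is an illustration only: the function name `biquadratic_luminance`, the ordering of the six coefficients, and the use of the light vector's two projected components `(lu, lv)` as the polynomial variables are assumptions, not details taken from the text above.

```python
from typing import Sequence


def biquadratic_luminance(coeffs: Sequence[float], lu: float, lv: float) -> float:
    """Evaluate a per-texel biquadratic polynomial in the light direction.

    coeffs holds six stored values (a0..a5) for one texel; lu and lv are
    assumed to be the projections of the normalized light vector onto the
    texel's local coordinate axes.
    """
    if len(coeffs) != 6:
        raise ValueError("a biquadratic polynomial needs six coefficients")
    a0, a1, a2, a3, a4, a5 = coeffs
    return a0 * lu * lu + a1 * lv * lv + a2 * lu * lv + a3 * lu + a4 * lv + a5


if __name__ == "__main__":
    texel = (1.0, 2.0, 3.0, 4.0, 5.0, 6.0)  # made-up coefficients
    print(biquadratic_luminance(texel, 0.5, -0.5))
```

Only six numbers are stored per texel regardless of how many input photographs were used for the fit, which is where the compactness of the representation comes from.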

Figure 5. Four relighting examples (top row) as linear combination of images, the coefficients being defined by novel images of a probe object (bottom, left image) which are reconstructed with the sampled probe images (right) [ FBS05 ]. Original image source: Martin Fuchs, Volker Blanz, and Hans-Peter Seidel, Bayesian relighting, Proceedings of the Eurographics Symposium on Rendering Techniques on July 29th - July 1st, 2005, Eurographics Association, 2005, ISBN 3-905673-23-1, pp. 157-164. ©Eurographics Association 2005; Reproduced by kind permission of the Eurographics Association

Figure 6. A transparent dragon and a torus digitally composed onto background images, preserving the illumination effects of refraction, reflection and colored filtering [ ZWCS99 ],[ CZH00 ]

[ WHON97, WHF01 ] propose the concept of an apparent BRDF to represent the outgoing radiance distribution passing through the pixel window on the image plane. By treating each pixel as an ordinary surface element, the radiance distribution of the pixel under various illumination conditions is recorded in a table (Fig. 3). [ KBMK01 ] sample the surface's incident field to reconstruct a non-parametric apparent BRDF at each visible point on the surface. [ BG01 ] iteratively produce an approximation of the reflectance model of diffuse, specular, isotropic or anisotropic textured objects using a single image and the 3D geometric model of the scene.

Techniques with unknown incident illumination must estimate it. [ NN04 ] use the eyes of a human subject to compute a large field of view of the illumination distribution of the environment surrounding a person, using the characteristics of the imaging system formed by the cornea of an eye and a camera viewing it (Fig. 4). Their assumption that a human subject is present in the scene at all times may not be practical, though. [ LKG03 ] use six steel spheres to recover the light source positions. They fit an average BRDF to the different materials of the objects in the scene. Some other techniques [ FBS05, TSED04 ] indirectly compute the incident illumination by using a black snooker ball or a nonmetallic sphere (Fig. 5). A very early work of IBRL, Inverse Rendering [ Mar98 ], solves for unknown lighting and reflectance properties of a scene, for relighting purposes.

The inverse problem of estimating reflectance functions can be stated as follows: Given an observation, what are the weights and parameters of the basis functions that best explain the observation?

Inverse methods observe an output and compute the probability that it came from a particular region in the incident illumination domain. The incident illumination is typically represented by a bounded region, such as an environment map, which is modeled as a sum of basis functions: rectangular [ ZWCS99 ] or Gaussian kernels [ CZH00 ]. These methods capture an environment matte, which in addition to capturing the foreground object and its traditional matte, also describes how the object refracts and reflects light. The object can then be placed in a new environment, where it will refract and reflect light from that scene (Fig. 6). Techniques [ MLP04, PD03 ] have also been proposed that progressively refine the approximation of the reflectance function with an increasing number of samples.
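As a rough illustration of how a matte with a rectangular basis function might be used when compositing, the sketch below adds three terms for one pixel: the foreground color, the uncovered background, and a weighted average of the new environment over an axis-aligned rectangle. The function names, the single-rectangle-per-pixel simplification, the scalar (grayscale) values and the parameter layout are all assumptions made for this example; they are not taken from [ ZWCS99 ] or [ CZH00 ].

```python
from typing import Sequence, Tuple

Rect = Tuple[int, int, int, int]  # (x0, y0, x1, y1), upper bounds exclusive


def region_average(env: Sequence[Sequence[float]], rect: Rect) -> float:
    """Mean value of the environment map over an axis-aligned rectangle."""
    x0, y0, x1, y1 = rect
    values = [env[y][x] for y in range(y0, y1) for x in range(x0, x1)]
    if not values:
        raise ValueError("rectangle covers no environment texels")
    return sum(values) / len(values)


def composite_pixel(
    foreground: float,
    alpha: float,
    background: float,
    weight: float,
    env: Sequence[Sequence[float]],
    rect: Rect,
) -> float:
    """Foreground + uncovered background + light gathered from the environment."""
    return foreground + (1.0 - alpha) * background + weight * region_average(env, rect)


if __name__ == "__main__":
    new_env = [[0.0, 2.0], [4.0, 6.0]]  # a tiny made-up environment map
    print(composite_pixel(0.25, 0.5, 1.0, 0.5, new_env, (0, 0, 2, 2)))
```

Swapping `new_env` for a different map changes the refracted and reflected contribution without re-capturing the object, which is the property the paragraph above describes.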

For a more accurate reflectance estimation, [ MPZ02 ] combine a forward method [ DHT00 ] for the low-frequency surface reflectance function and an inverse method, environment matting [ CZH00 ], for the high-frequency surface reflectance function. This is used for capturing all the complex lighting effects, like high-frequency reflections and refractions.

A global transport simulator creates functions over the object's surface, representing transfer of arbitrary incident lighting, into transferred radiance which includes global effects like shadows, self-interreflections, occlusion and scattering effects. When the actual lighting condition is substituted at run-time, the resulting model provides global illumination effects.

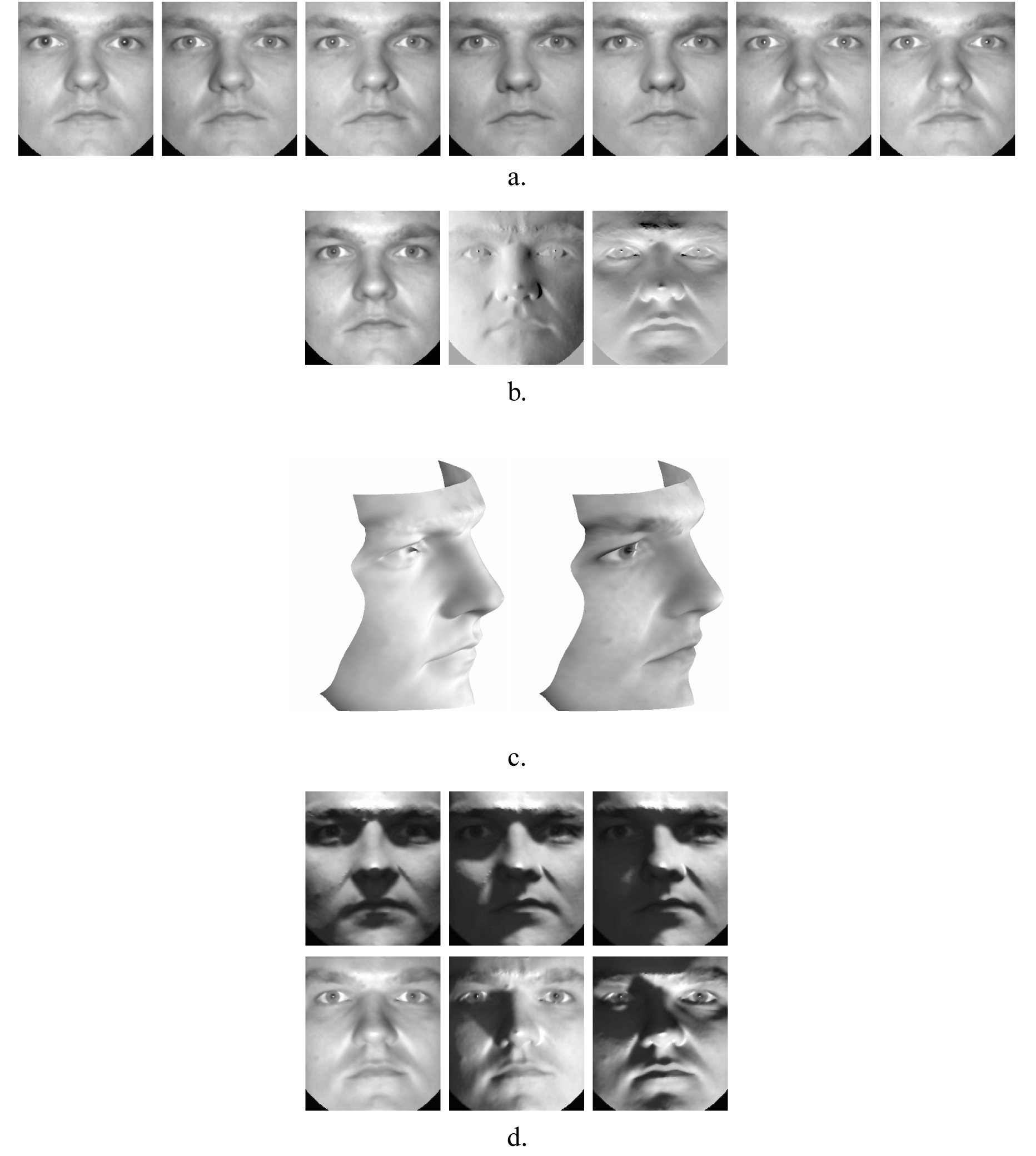

Figure 7. Illuminating faces using Illumination cones. First row (a): Input dataset, Second row (b): Basis Images, Third and Fourth row (d): Relit Faces [ GKB98, GBK01 ]. Original image source: Athinodoros S. Georghiades, Peter N. Belhumeur, and David J. Kriegman, From few to many: Illumination cone models for face recognition under variable lighting and pose, IEEE Trans. Pattern Anal. Mach. Intell. 23(2001), no. 6, 643-660. ©2001 IEEE

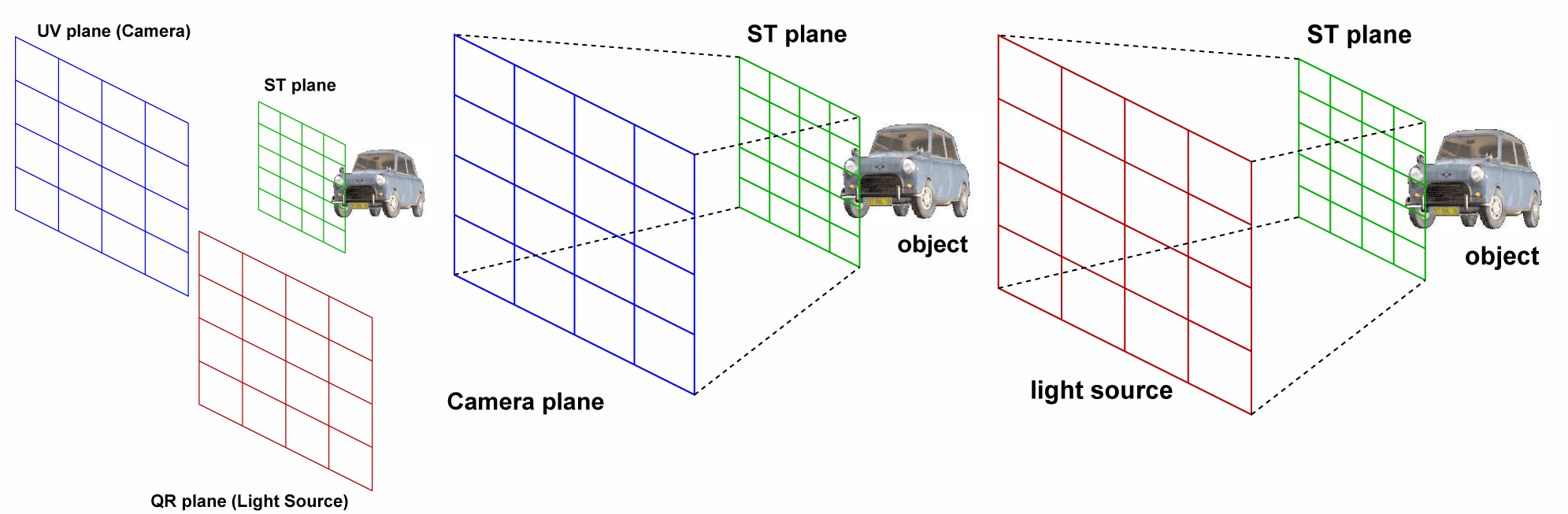

Figure 8. The tri-planar configuration and the dual light slab parametrization. Each captured value represents the radiance reflected through (s,t) and received at a certain (u,v) when the scene is illuminated by a point source positioned at (q,r) [ LWS02 ]

The radiance transport is pre-computed using a detailed model of the scene [ SKS02 ]. To improve upon the rendering performance, the incident illumination can be represented using spherical harmonics [ KSS02, RH01, SKS02 ] or wavelets [ NRH03 ]. The reflectance field, stored per vertex as a transfer matrix, can be compressed using PCA [ SHHS03 ] or wavelets [ NRH03 ].
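A minimal sketch of the run-time step follows, assuming the lighting is projected onto the first four real spherical harmonic basis functions (bands 0 and 1) and that each vertex stores a transfer vector in the same basis. The helper names, the sample format `(direction, radiance, solid_angle)` and the restriction to four coefficients are assumptions for illustration; practical systems typically use more bands and a full transfer matrix for glossy surfaces.

```python
from typing import Iterable, List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def sh_basis_l1(direction: Vec3) -> List[float]:
    """Real spherical harmonics for bands 0 and 1 at a unit direction."""
    x, y, z = direction
    return [0.282095, 0.488603 * y, 0.488603 * z, 0.488603 * x]


def project_lighting(samples: Iterable[Tuple[Vec3, float, float]]) -> List[float]:
    """Project sampled incident radiance onto the four basis functions.

    Each sample is (unit direction, radiance, solid angle of the sample).
    """
    coeffs = [0.0, 0.0, 0.0, 0.0]
    for direction, radiance, solid_angle in samples:
        for i, basis_value in enumerate(sh_basis_l1(direction)):
            coeffs[i] += radiance * basis_value * solid_angle
    return coeffs


def shade_vertex(transfer: Sequence[float], light_coeffs: Sequence[float]) -> float:
    """Run-time shading: dot product of pre-computed transfer and lighting."""
    return sum(t * l for t, l in zip(transfer, light_coeffs))


if __name__ == "__main__":
    light = project_lighting([((0.0, 0.0, 1.0), 2.0, 0.5)])
    print(shade_vertex([1.0, 0.0, 1.0, 0.0], light))
```

All visibility and interreflection effects are assumed to have been baked into `transfer` during pre-computation, so relighting costs one short dot product per vertex.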

[ NRH04 ] focus on relighting for changing illumination and viewpoint, while including all-frequency shadows, reflections and lighting. They propose a novel technique, based on triple product integrals, for factorizing the visibility and the material properties. Recently, [ WTL05 ] presented a method of relighting translucent objects under all-frequency lighting. They apply a two-pass hierarchical technique for computing non-linearly approximated transport vectors due to diffuse multiple scattering.

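Basis Function-based techniques decompose the luminous intensity distributions into a series of basis functions; the luminance is then obtained by simply summing the luminance from each light source whose luminous intensity distribution obeys one of the basis functions. Assuming multiple light sources, luminance at a certain point is obtained by calculating the luminance from each light source and summing them. In general, luminance calculation obeys the two following rules of superposition: (1) additivity, i.e. the luminance due to a light source whose luminous intensity distribution is a sum of distributions equals the sum of the luminances due to each distribution; and (2) scaling, i.e. multiplying a luminous intensity distribution by a weight multiplies the resulting luminance by the same weight.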

These techniques calculate luminance when the luminous intensity distributions and the directions of the light sources are altered. The luminous intensity distribution of a point light source is expressed as the sum of a series of basis distributions. The luminance due to a light source whose luminous intensity distribution corresponds to one of the basis distributions is calculated in advance and stored as a basis luminance. Using the aforementioned property 1, the luminance due to a light source whose luminous intensity distribution is a sum of basis distributions is calculated by summing the pre-calculated basis luminances corresponding to each individual basis distribution. Using property 2, the luminance due to a light source whose luminous intensity distribution can be expressed as a weighted sum of the basis distributions is obtained by multiplying each basis luminance by its corresponding weight and summing the results. Thus, once the basis luminances are calculated in the pre-process, the resulting luminance can be obtained quickly by calculating the weighted sum of the basis luminances. Some desirable properties of a basis set of illumination functions are:

We classify the type of basis functions (used in Relighting) into five categories and provide their corresponding representative methods:

- Steerable Functions [ NSD94 ]: Under natural illuminants like the sun, whose direction varies along a path on the sphere, a desirable property of the basis is rotation invariance. A steerable function is one which can be written as a linear combination of rotated versions of itself.

- Spherical Harmonics [ DKNY95 ]: Spherical harmonics form an orthonormal basis defined over the unit sphere. In the context of interior lighting design, spherical harmonics can express light sources from different directions. They are effective for recovering illumination that is well localized in the frequency domain.

- Singular Value Decomposition/Principal Component Analysis [ GBK01 ] (Fig. 7), [ GKB98, OK01, HWT04 ]: These techniques are used for simplifying a data set, or more specifically for reducing its dimensionality while retaining as much information as possible. They compute a compact and optimal description of the data set.

- N-mode SVD: Multilinear algebra of higher-order tensors [ VT04, FKIS02, SVLD03, TZL02 ]: It is an extension of the conventional matrix singular value decomposition to tensors. A major advantage of this model is that it is purely image-based, thereby avoiding the nontrivial problem of recovering 3D geometric structure from the image data. Further, this formulation can disentangle and explicitly represent each of the multiple factors inherent to image formation, such as illumination change and viewpoint change.
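The steerable property and the weighted-sum relighting discussed in this section can be illustrated together with a deliberately small example. It assumes a one-dimensional light distribution `cos(theta)`, which is steerable because its rotated copy `cos(theta - phi)` equals `cos(phi) * cos(theta) + sin(phi) * sin(theta)`, and `sin(theta)` is itself a rotated copy of `cos(theta)`. The function names and the nested-list image layout are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def steering_weights(phi: float) -> Tuple[float, float]:
    """Weights that rotate the cos(theta) distribution by the angle phi.

    cos(theta - phi) = cos(phi) * cos(theta) + sin(phi) * sin(theta)
    """
    return (math.cos(phi), math.sin(phi))


def combine_basis_luminances(
    basis_images: Sequence[Sequence[Sequence[float]]],
    weights: Sequence[float],
) -> List[List[float]]:
    """Weighted sum of pre-computed basis luminance images."""
    if len(basis_images) != len(weights):
        raise ValueError("one weight is needed per basis image")
    height, width = len(basis_images[0]), len(basis_images[0][0])
    return [
        [sum(w * img[y][x] for w, img in zip(weights, basis_images)) for x in range(width)]
        for y in range(height)
    ]


if __name__ == "__main__":
    # Basis luminance images rendered once for the cos and sin distributions
    # (made-up values); rotating the light only changes the two weights.
    basis_cos = [[1.0, 2.0]]
    basis_sin = [[3.0, 4.0]]
    print(combine_basis_luminances([basis_cos, basis_sin], steering_weights(math.pi / 6)))
```

With a steerable basis, a light that moves along its path never requires new basis images; only `steering_weights` is re-evaluated.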

The appearance of the world can be thought of as the dense array of light rays filling the space, which can be observed by posing eyes or cameras in space. These light rays can be represented through the plenoptic function (from plenus, complete or full; and optics) [ AB91 ]. As pointed out by Adelson and Bergen:

The world is made of three-dimensional objects, but these objects do not communicate their properties directly to an observer. Rather, the objects fill the space around them with the pattern of light rays that constitutes the plenoptic function, and the observer takes samples from this function. The plenoptic function serves as the sole communication link between the physical objects and their retinal images. It is the intermediary between the world and the eye.

All basic visual measurements can be considered to characterize local change along one or more dimensions of a single function that describes the structure of the information in the light impinging on an observer. The plenoptic function represents the intensity of the light rays passing through the camera center at every location, at every possible viewing angle. The plenoptic function is a 7D function that models a 3D dynamic environment by recording the light rays at every space location $(V_x, V_y, V_z)$, from every possible direction $(\theta, \phi)$, over any range of wavelengths $(\lambda)$ and at any time $(t)$, i.e.,

$$P = P_7(V_x, V_y, V_z, \theta, \phi, \lambda, t)$$

An image of a scene taken with a pinhole camera records the light rays passing through the camera's center of projection. Such images can therefore be considered samples of the plenoptic function. Basically, the function tells us how the environment looks when our eye is positioned at V = (Vx,Vy,Vz). The time parameter t actually models all the other unmentioned factors, such as changes in the illumination and in the scene.

Plenoptic Function-based relighting techniques propose new formulations of the plenoptic function, which explicitly specify the illumination component. Using these formulations, one can generate complex lighting effects. One can simulate various lighting configurations such as multiple light sources, light sources with different colors and also arbitrary types of light sources (Section 5.1).

[ WH04 ] discuss a new formulation of the plenoptic function, the Plenoptic Illumination Function, which explicitly specifies the illumination component. They propose a local illumination model, which utilizes the rules of superposition for relighting under various lighting configurations. [ LWS02 ], on the other hand, propose a representation of the plenoptic function called the reflected irradiance field. The reflected irradiance field stores the reflection of surface irradiance as an illuminating point light source moves on a plane. With the reflected irradiance field, the relit object/scene can be synthesized simply by interpolating and superimposing appropriate sample reflections (Fig. 8).
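The "interpolate and superimpose" step can be sketched as follows, assuming the sample reflections were captured with the point light on a regular integer grid of positions (q, r) on the light plane. Bilinear interpolation is an assumption chosen for simplicity here, not necessarily the scheme used by [ LWS02 ]; the function names and the flattened image layout are likewise hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Image = Sequence[float]  # a flattened image, one value per pixel


def interpolate_reflection(samples: Sequence[Sequence[Image]], q: float, r: float) -> List[float]:
    """Bilinearly blend the four samples captured nearest to light position (q, r).

    samples[i][j] is the image captured with the light at grid position (i, j);
    (q, r) is assumed to lie inside the grid.
    """
    rows, cols = len(samples), len(samples[0])
    if rows < 2 or cols < 2:
        raise ValueError("need at least a 2x2 grid of light positions")
    i0 = max(0, min(int(math.floor(q)), rows - 2))
    j0 = max(0, min(int(math.floor(r)), cols - 2))
    fq, fr = q - i0, r - j0
    corners = [
        ((1 - fq) * (1 - fr), samples[i0][j0]),
        ((1 - fq) * fr, samples[i0][j0 + 1]),
        (fq * (1 - fr), samples[i0 + 1][j0]),
        (fq * fr, samples[i0 + 1][j0 + 1]),
    ]
    pixel_count = len(samples[0][0])
    return [sum(w * img[p] for w, img in corners) for p in range(pixel_count)]


def relight(samples: Sequence[Sequence[Image]], lights: Sequence[Tuple[float, float, float]]) -> List[float]:
    """Superimpose the interpolated reflections of several lights (q, r, intensity)."""
    result = [0.0] * len(samples[0][0])
    for q, r, intensity in lights:
        reflection = interpolate_reflection(samples, q, r)
        for p, value in enumerate(reflection):
            result[p] += intensity * value
    return result


if __name__ == "__main__":
    grid = [[[0.0], [2.0]], [[4.0], [6.0]]]  # 2x2 light grid, one-pixel images
    print(relight(grid, [(0.5, 0.5, 1.0), (1.0, 1.0, 0.5)]))
```

Superimposing several lights is a plain sum because of the linearity of light transport, so coloured or multiple sources only add more entries to `lights`.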

In this section, we discuss some of the relevant issues involving IBRL. We also provide a brief comparison between all the categories of IBRL techniques.

Illumination is a complex and high-dimensional function. To reduce its dimensionality and to analyze the complexity and practicality of relighting techniques, it is necessary to assume a specific type of light source. The two most commonly used types of light sources are:

- Directional Light Source (DLS): A DLS emits parallel rays which do not diverge or become dimmer with distance. It is parametrized using only two variables (θ,φ), which denote the direction of the light vector. For planar surfaces lit by a DLS, the degree of shading is the same across the whole surface. The computations required for directional lights are therefore considerably fewer. Using a DLS is also more meaningful, because the captured pixel value in an image tells us what the surface elements behind the pixel window actually look like when all surface elements are illuminated by parallel rays in the direction of the viewing point. A DLS serves well for synthetic objects/scenes, where it is used to approximate the light coming from an extremely distant light source. But it poses practical difficulties when capturing real and large objects/scenes. It can be approximated with strong spotlights at a distance which greatly exceeds the size of the object/scene.

- Point Light Source (PLS): A PLS shines uniformly in all directions. Its intensity decreases with the distance to the light source. A PLS is parametrized using three variables (Px,Py,Pz), which denote the 3D position of the PLS in space. As a result, the angle between the direction to the light source and the normals of the various affected surfaces can change dramatically from one surface to the next. In the presence of multiple light sources, this means that for every vertex, one has to determine the direction of the light vector corresponding to each light source. This requires determining the depth map of the images using computer vision algorithms, which, though they provide good approximations, make the lighting calculations computationally intensive. Point light sources are usually close to the observer and so are more practical for real and large objects/scenes.
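The difference in parametrization between the two light types can be sketched as below. The spherical-angle convention (θ measured from the +z axis, φ as azimuth in the x-y plane), the inverse-square falloff for the point light, and the function names are assumptions introduced for this illustration.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def dls_light_vector(theta: float, phi: float) -> Vec3:
    """Directional light: one unit vector shared by every surface point."""
    return (
        math.sin(theta) * math.cos(phi),
        math.sin(theta) * math.sin(phi),
        math.cos(theta),
    )


def pls_light_vector(light_pos: Vec3, point: Vec3) -> Tuple[Vec3, float]:
    """Point light: the unit vector and attenuation depend on the surface point.

    Returns (unit vector from the point towards the light, 1 / distance^2).
    The surface point's 3D position is needed, hence the need for depth.
    """
    dx, dy, dz = (l - p for l, p in zip(light_pos, point))
    dist = math.sqrt(dx * dx + dy * dy + dz * dz)
    if dist == 0.0:
        raise ValueError("surface point coincides with the light position")
    return (dx / dist, dy / dist, dz / dist), 1.0 / (dist * dist)


if __name__ == "__main__":
    print(dls_light_vector(0.0, 0.0))
    print(pls_light_vector((0.0, 0.0, 2.0), (0.0, 0.0, 0.0)))
```

`dls_light_vector` takes no surface position at all, whereas `pls_light_vector` must be re-evaluated per point, which mirrors the extra cost of point lights noted in the list above.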

Sampling is one of the key issues of image-based graphics. It is a non-trivial problem because it involves the complex relationship among three elements: the depth and texture of the scene, the number of sample images, and the rendering solution. One needs to determine the minimum sampling rate for anti-aliased image-based rendering. Comparatively, very little research [ CCST00, SK00, ZC04, ZC01, ZC03 ] has gone into trying to tackle this problem.

In the context of IBRL, sampling deals with the illumination component for efficient and realistic relighting [ WH04 ]. [ LWS02 ] prove that there exists a geometry-independent bound on the sampling interval, which is analytically related to the BRDF of the scene. It ensures that the intensity error in the relit image is smaller than a user-specified tolerance, thus eliminating noticeable artifacts.

We compare all the IBRL categories (Fig. 9 ) on the basis of the following factors:

- Object and Image Space: Reflectance function-based relighting techniques are closest to the object space, since they try to estimate a scene/object property, reflectance. The essence of these techniques lies in modeling the reflectance of the scene/object correctly and efficiently from moderately sampled images (and not in sampling it densely). The reflectance model, if computed correctly, can reproduce very realistic illumination effects, but incorrect modeling can result in unacceptable artifacts. Since the scene/object needs to be only moderately sampled, the acquisition time for these techniques is usually shorter than for the two other IBRL categories. A major disadvantage of reflectance function-based relighting techniques is that not all illumination effects can be reproduced using a single model of the reflectance. So, a scene composed of objects with different reflectances cannot be realistically relit in its entirety. Once the compact reflectance model is computed, relighting can be performed in real time. Some reflectance function-based techniques (pre-computed radiance transport), unlike the other two IBRL categories, require the geometry of the scene/object to estimate its reflectance. Though these techniques produce results devoid of artifacts, the geometry of the object/scene may not always be available.

  Plenoptic function-based relighting techniques are closest to the image space. They sample the scene under different illumination conditions and use these images for relighting. Since these techniques are entirely image-dependent, they rely heavily on sampling the object/scene densely to create interesting and complex illumination effects (specularity, highlights, shadows, refraction etc.). Therefore, in general, plenoptic function-based techniques need a huge dataset to reproduce all the desired illumination effects, and the acquisition (photographs of a real scene/object) or synthesis (images of an artificial scene/object) of this dataset is a long and tedious process. Processing the dataset to create the desired illumination effects also imposes a heavy computational burden and high memory requirements. The main advantage of plenoptic function-based techniques is that they are measurement-based, and so they produce very realistic relighting.

  While reflectance function-based and plenoptic function-based relighting techniques are in general at the two extremes of object and image space respectively, basis function-based relighting techniques are a confluence of the two spaces. Basis function-based techniques sample the scene densely and then create a compact intermediate representation of the scene/object for further processing and relighting. Since these techniques also depend on the sampling, acquisition (or synthesis) of the dataset and computation of the basis representation take a considerable amount of time. More importantly, processing the input images into their intermediate representation depends on the effectiveness and appropriateness of the basis function used, and so is prone to errors that result in undesirable illumination effects. Relighting can be achieved in real time due to the compact basis representation of the scene/object.

- Frequency components: While both reflectance function and plenoptic function-based relighting techniques can capture both the high and low frequency components, basis function-based relighting techniques are usually capable of capturing only the low frequency effects. Forward reflectance function-based techniques capture the low frequency components and the inverse methods capture the high frequency components. Plenoptic function-based techniques on the other hand, sample the scene densely in the presence of high frequency components and so are able to realistically reproduce relighting.

- Sampling density: For the purposes of IBRL, the sampling density of the corresponding techniques usually increases in the following order - reflectance function-based, basis function-based, plenoptic function-based. Therefore, the acquisition, processing and relighting time also increase in the same order.

- Applications: Reflectance-based relighting techniques can be utilized to edit the surface properties of an object in a scene (for example, converting a diffuse object to specular) in addition to performing IBRL. The techniques of the other two categories are not capable of generating such effects. They mainly illuminate the scene with a novel illumination with or without change in viewpoint. So reflectance function-based IBRL could be efficiently utilized for applications that require editing both lighting configurations and material properties of the scene/objects in the scene.

A lot of research remains to be done in IBRL. Some directions are:

- Efficient Representation: BRDF-based IBRL techniques require a huge number of samples to accurately estimate a reflectance function. Most techniques, for practical purposes, consider only low-frequency components, which compromises the visual quality of the rendered image. Almost all basis function-based techniques also require a number of images for relighting. Thus, a lot of research is required to find accurate and efficient representations of a scene which capture all the complex phenomena of lighting and reflectance functions. Variability in terms of viewpoint and illumination leads to huge data sets, which in turn incur huge computational costs. A related area which deserves considerable investigation is IBRL for real and large environments.

- Sampling: Most techniques do not deal with the minimum sampling density required for anti-aliased IBRL. [ LWS02 ] discuss a geometry-independent sampling density based on radiometric tolerance. Though this serves our purpose of efficient sampling of certain scenes, what we need is a photometric tolerance, which takes into account the response function of human vision. [ DMZ05 ] discuss the importance of a psychophysical quality scale for realistic IBRL of glossy surfaces.

- Compression: No matter how much the storage and memory increase in the future, compression is always useful to keep the IBRL data at a manageable size. A high compression ratio in IBRL relies heavily on how well the images can be predicted. The sampled images for IBRL usually have a strong inter-pixel and intra-pixel correlation, which needs to be harnessed for efficient compression. Currently, techniques such as spherical harmonics, vector quantization, discrete cosine transform and spherical wavelets are used for compressing the datasets of IBRL, but all of these have their own inherent disadvantages.

- Dynamics: Most IBRL techniques deal with static environments, in terms of change in geometry of the scene/object. With the development of high-end programmable graphics processors, it is conceivable that IBRL can be applied to dynamic environments.

We have surveyed the recent developments in the field of Image-based Relighting and classified them into three categories based on how they capture the scene/illumination information: Reflectance Function-based, Basis Function-based and Plenoptic Function-based. We have presented each category along with its representative methods. Relevant issues in IBRL, such as the type of light source and the sampling density, have also been discussed.

It is interesting to note the trade-off between geometry and images that anti-aliased image-based rendering requires. Efficient representation, realistic rendering, the limitations of computer vision algorithms and computational costs should motivate researchers to develop efficient image-based relighting techniques in the future.

We would like to thank the members of ViGIL, IIT Bombay for their continued help and support.

This research was supported by the fellowship fund endowed by Infosys Technologies Limited, India.

[AB91] The plenoptic function and the elements of early vision, Computational Models of Visual Processing, 1991, MIT Press, Cambridge, MA, pp. 3–20, isbn 0-262-12155-7.

[BG01] Image-based rendering of diffuse, specular and glossy surfaces from a single image, SIGGRAPH '01: Proceedings of the 28th annual conference on Computer graphics and interactive techniques, 2001, pp. 107–116, New York, NY, USA, ACM Press, isbn 1-58113-292-1.

[CCST00] Plenoptic sampling, SIGGRAPH '00: Proceedings of the 27th annual conference on Computer graphics and interactive techniques, 2000, pp. 307–318, ACM Press/Addison-Wesley Publishing Co., New York, NY, USA, isbn 1-58113-208-5.

[CZH00] Environment matting extensions: towards higher accuracy and real-time capture, SIGGRAPH '00: Proceedings of the 27th annual conference on Computer graphics and interactive techniques, 2000, pp. 121–130, ACM Press/Addison-Wesley Publishing Co., New York, NY, USA, isbn 1-58113-208-5.

[DHT00] Acquiring the reflectance field of a human face, SIGGRAPH '00: Proceedings of the 27th annual conference on Computer graphics and interactive techniques, 2000, pp. 145–156, ACM Press/Addison-Wesley Publishing Co., New York, NY, USA, isbn 1-58113-208-5.

[DKNY95] A quick rendering method using basis functions for interactive lighting design, Computer Graphics Forum, (1995), no. 3, 229–240, issn 0167-7055.

[DMZ05] A perceptual quality scale for image-based relighting of glossy surfaces, CW417, June 2005, Katholieke Universiteit Leuven.

[FBS05] Bayesian relighting, Proceedings of the Eurographics Symposium on Rendering Techniques, June 29th - July 1st, 2005, pp. 157–164, Eurographics Association, Konstanz, Germany, isbn 3-905673-23-1.

[FKIS02] Appearance based object modeling using texture database: acquisition, compression and rendering, EGRW '02: Proceedings of the 13th Eurographics workshop on Rendering (Aire-la-Ville, Switzerland), 2002, pp. 257–266, Eurographics Association, isbn 1-58113-534-3.

[GBK01] From few to many: Illumination cone models for face recognition under variable lighting and pose, IEEE Trans. Pattern Anal. Mach. Intell., (2001), no. 6, 643–660, issn 0162-8828.

[GKB98] Illumination cones for recognition under variable lighting: Faces, CVPR '98: Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition, 1998, pp. 52–58, IEEE Computer Society, Washington, DC, USA, issn 1063-6919.

[GLL04] Disco: acquisition of translucent objects, ACM Trans. Graph., (2004), no. 3, 835–844, issn 0730-0301.

[HWT04] Animatable facial reflectance fields, Proceedings of the 15th Eurographics Workshop on Rendering Techniques, 2004, pp. 309–321, isbn 3-905673-12-6.

[JMLH01] A practical model for subsurface light transport, SIGGRAPH '01: Proceedings of the 28th annual conference on Computer graphics and interactive techniques, 2001, pp. 511–518, ACM Press, New York, NY, USA, isbn 1-58113-292-1.

[Kaj85] Anisotropic reflection models, SIGGRAPH '85: Proceedings of the 12th annual conference on Computer graphics and interactive techniques, 1985, pp. 15–21, ACM Press, New York, NY, USA, issn 0097-8930.

[KBMK01] Image-based modeling and rendering of surfaces with arbitrary BRDFs, Proceedings of Computer Vision and Pattern Recognition CVPR'01, 2001, pp. 568–575, isbn 0-7695-1271-0.

[KSS02] Fast, arbitrary BRDF shading for low-frequency lighting using spherical harmonics, EGRW '02: Proceedings of the 13th Eurographics workshop on Rendering (Aire-la-Ville, Switzerland), 2002, pp. 291–296, Eurographics Association, isbn 1-58113-534-3.

[LKG03] Image-based reconstruction of spatial appearance and geometric detail, ACM Trans. Graph., (2003), no. 2, 234–257, issn 0730-0301.

[LWS02] Relighting with the reflected irradiance field: Representation, sampling and reconstruction, Int. J. Comput. Vision, (2002), no. 2-3, 229–246, issn 0920-5691.

[Mar98] Inverse rendering for computer graphics, Cornell University, 1998, Adviser Donald P. Greenberg.

[MGW01] Polynomial texture maps, SIGGRAPH '01: Proceedings of the 28th annual conference on Computer graphics and interactive techniques, 2001, pp. 519–528, ACM Press, New York, NY, USA, isbn 1-58113-292-1.

[MLP04] Progressively-refined reflectance functions from natural illumination, Proceedings of the 15th Eurographics Workshop on Rendering Techniques, 2004, pp. 299–308, Eurographics Association, isbn 3-905673-12-6.

[MPDW03] Smooth reconstruction and compact representation of reflectance functions for image-based relighting, Proceedings of the 15th Eurographics Workshop on Rendering, 2004, pp. 287–298, Eurographics Association.

[MPZ02] Acquisition and rendering of transparent and refractive objects, EGRW '02: Proceedings of the 13th Eurographics workshop on Rendering (Aire-la-Ville, Switzerland), 2002, pp. 267–278, Eurographics Association, isbn 1-58113-534-3.

[NN04] Eyes for relighting, ACM Trans. Graph., (2004), no. 3, 704–711, issn 0730-0301.

[NRH77] Geometric considerations and nomenclature for reflectance, NBS Monograph 160, National Bureau of Standards (US), 1977.

[NRH03] All-frequency shadows using non-linear wavelet lighting approximation, ACM Trans. Graph., (2003), no. 3, 376–381, issn 0730-0301.

[NRH04] Triple product wavelet integrals for all-frequency relighting, ACM Trans. Graph., (2004), no. 3, 477–487, issn 0730-0301.

[NSD94] Efficient Re-rendering of Naturally Illuminated Environments, Fifth Eurographics Workshop on Rendering (Darmstadt, Germany), 1994, pp. 359–373, Springer-Verlag, isbn 3-540-58475-7.

[OK01] Image detection under varying illumination and pose, Proceedings of the Eighth IEEE International Conference On Computer Vision ICCV'01, 2001, pp. 668–673, IEEE, isbn 0-7695-1143-0.

[PD03] Wavelet environment matting, EGRW '03: Proceedings of the 14th Eurographics workshop on Rendering (Aire-la-Ville, Switzerland), 2003, pp. 157–166, Eurographics Association, isbn 3-905673-03-7.

[RH01] An efficient representation for irradiance environment maps, SIGGRAPH '01: Proceedings of the 28th annual conference on Computer graphics and interactive techniques, 2001, pp. 497–500, ACM Press, New York, NY, USA, isbn 1-58113-374-x.

[SHHS03] Clustered principal components for precomputed radiance transfer, ACM Trans. Graph., (2003), no. 3, 382–391, issn 0730-0301.

[SK00] A review of image-based rendering techniques, IEEE/SPIE Visual Communications and Image Processing (VCIP), 2000, pp. 2–13, IEEE/SPIE, isbn 0-8194-3703-4.

[SKS02] Precomputed radiance transfer for real-time rendering in dynamic, low-frequency lighting environments, SIGGRAPH '02: Proceedings of the 29th annual conference on Computer graphics and interactive techniques, 2002, pp. 527–536, ACM Press, New York, NY, USA, isbn 1-58113-521-1.

[SVLD03] Interactive rendering with bidirectional texture functions, Computer Graphics Forum, (2003), no. 3, 463–472, issn 1467-8659.

[TSED04] Unlighting the Parthenon, SIGGRAPH 2004 Sketch, 2004.

[TZL02] Synthesis of bidirectional texture functions on arbitrary surfaces, SIGGRAPH '02: Proceedings of the 29th annual conference on Computer graphics and interactive techniques, 2002, pp. 665–672, ACM Press, New York, NY, USA, isbn 1-58113-521-1.

[VT04] Tensortextures: multilinear image-based rendering, ACM Trans. Graph., (2004), no. 4, 336–342, issn 0730-0301.

[WH04] Image-based relighting: representation and compression, Integrated image and graphics technologies, pp. 161–180, Kluwer Academic Publishers, Norwell, MA, USA, The Kluwer International Series in Engineering and Computer Science, 2004, isbn 1-4020-7774-2.

[WHF01] Interactive relighting of panoramas, IEEE Comput. Graph. Appl., (2001), no. 2, 32–41, issn 0272-1716.

[WHON97] Image-based rendering with controllable illumination, Proceedings of the Eurographics Workshop on Rendering Techniques '97, pp. 13–22, 1997, Springer-Verlag, isbn 3-2183001-4.

[WTL05] All-frequency interactive relighting of translucent objects with single and multiple scattering, ACM Trans. Graph., (2005), no. 3, 1202–1207, issn 0730-0301.

[ZC01] Generalized plenoptic sampling, AMP01-06, Carnegie Mellon Technical Report, 2001.

[ZC03] Spectral analysis for sampling image-based rendering data, IEEE Transactions on Circuits and Systems for Video Technology, (2003), no. 11, 1038–1050, issn 1051-8215.

[ZC04] A survey on image-based rendering: Representation, sampling and compression, SPIC, (2004), no. 1, 1–28.

[ZWCS99] Environment matting and compositing, SIGGRAPH '99: Proceedings of the 26th annual conference on Computer graphics and interactive techniques, ACM Press/Addison-Wesley Publishing Co., 1999, pp. 205–214, isbn 0-201-48560-5.

[1] http://www.debevec.org/Research/LS/ by Paul Debevec, visited December 2nd, 2008

[2] http://www.cs.kuleuven.be/~graphics/CGRG.PUBLICATIONS/RW4DILF/ by Vincent Masselus, Pieter Peers, Philip Dutré, and Yves D. Willems, visited December 3rd, 2008

[3] http://www1.cs.columbia.edu/CAVE/projects/world_eye/ by K. Nishino and S.K. Nayar, visited May 5th, 2009

License

Any party may pass on this work by electronic means and make it available for download under the terms and conditions of the Digital Peer Publishing License. The text of the license is available on the Internet at http://www.dipp.nrw.de/lizenzen/dppl/dppl/DPPL_v2_de_06-2004.html.

Recommended citation

Biswarup Choudhury and Sharat Chandran, A Survey of Image-based Relighting Techniques. JVRB - Journal of Virtual Reality and Broadcasting, 4(2007), no. 7. (urn:nbn:de:0009-6-21208)

When citing this article, please give the exact URL and the date of your last visit to this online address.